高级功能 消息存储 分布式队列因为有高可靠性的要求,所以数据要进行持久化存储。

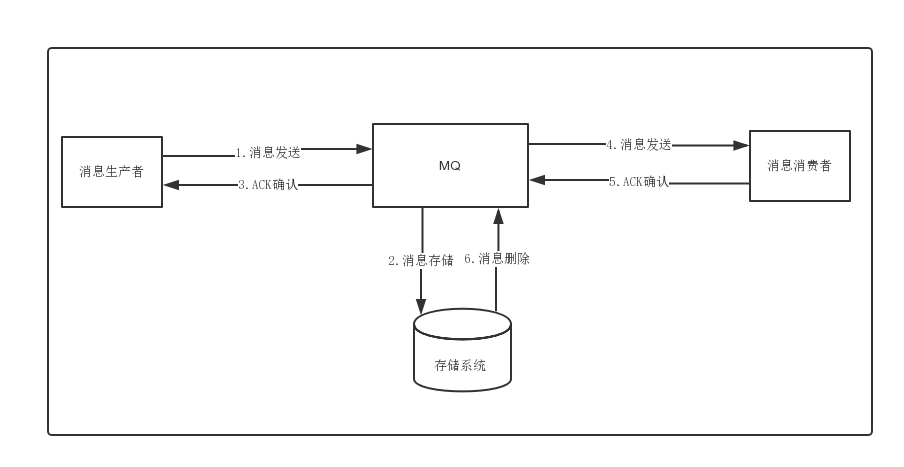

消息生成者发送消息

MQ收到消息,将消息进行持久化,在存储中新增一条记录

返回ACK给生产者

MQ push 消息给对应的消费者,然后等待消费者返回ACK

如果消息消费者在指定时间内成功返回ack,那么MQ认为消息消费成功,在存储中删除消息,即执行第6步;如果MQ在指定时间内没有收到ACK,则认为消息消费失败,会尝试重新push消息,重复执行4、5、6步骤

MQ删除消息

存储介质

Apache下开源的另外一款MQ—ActiveMQ(默认采用的KahaDB做消息存储)可选用JDBC的方式来做消息持久化,通过简单的xml配置信息即可实现JDBC消息存储。由于,普通关系型数据库(如Mysql)在单表数据量达到千万级别的情况下,其IO读写性能往往会出现瓶颈。在可靠性方面,该种方案非常依赖DB,如果一旦DB出现故障,则MQ的消息就无法落盘存储会导致线上故障

性能对比 文件系统>关系型数据库DB

消息的存储和发送 消息存储 磁盘如果使用得当,磁盘的速度完全可以匹配上网络 的数据传输速度。目前的高性能磁盘,顺序写速度可以达到600MB/s, 超过了一般网卡的传输速度。但是磁盘随机写的速度只有大概100KB/s,和顺序写的性能相差6000倍!因为有如此巨大的速度差别,好的消息队列系统会比普通的消息队列系统速度快多个数量级。RocketMQ的消息用顺序写,保证了消息存储的速度。

消息发送 Linux操作系统分为【用户态】和【内核态】,文件操作、网络操作需要涉及这两种形态的切换,免不了进行数据复制。

一台服务器 把本机磁盘文件的内容发送到客户端,一般分为两个步骤:

1)read;读取本地文件内容;

2)write;将读取的内容通过网络发送出去。

这两个看似简单的操作,实际进行了4 次数据复制,分别是:

从磁盘复制数据到内核态内存;

从内核态内存复 制到用户态内存;

然后从用户态 内存复制到网络驱动的内核态内存;

最后是从网络驱动的内核态内存复 制到网卡中进行传输。

通过使用mmap的方式,可以省去向用户态的内存复制,提高速度。这种机制在Java中是通过MappedByteBuffer实现的

RocketMQ充分利用了上述特性,也就是所谓的“零拷贝”技术,提高消息存盘和网络发送的速度。

> 这里需要注意的是,采用MappedByteBuffer这种内存映射的方式有几个限制,其中之一是一次只能映射1.5~2G 的文件至用户态的虚拟内存,这也是为何RocketMQ默认设置单个CommitLog日志数据文件为1G的原因了

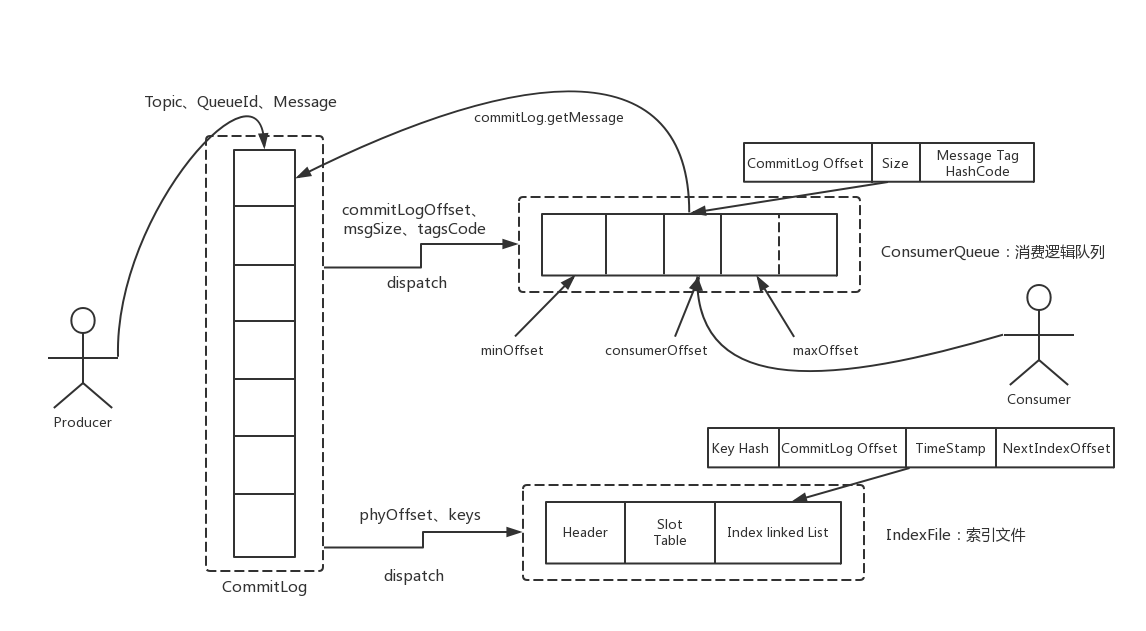

### 消息存储结构

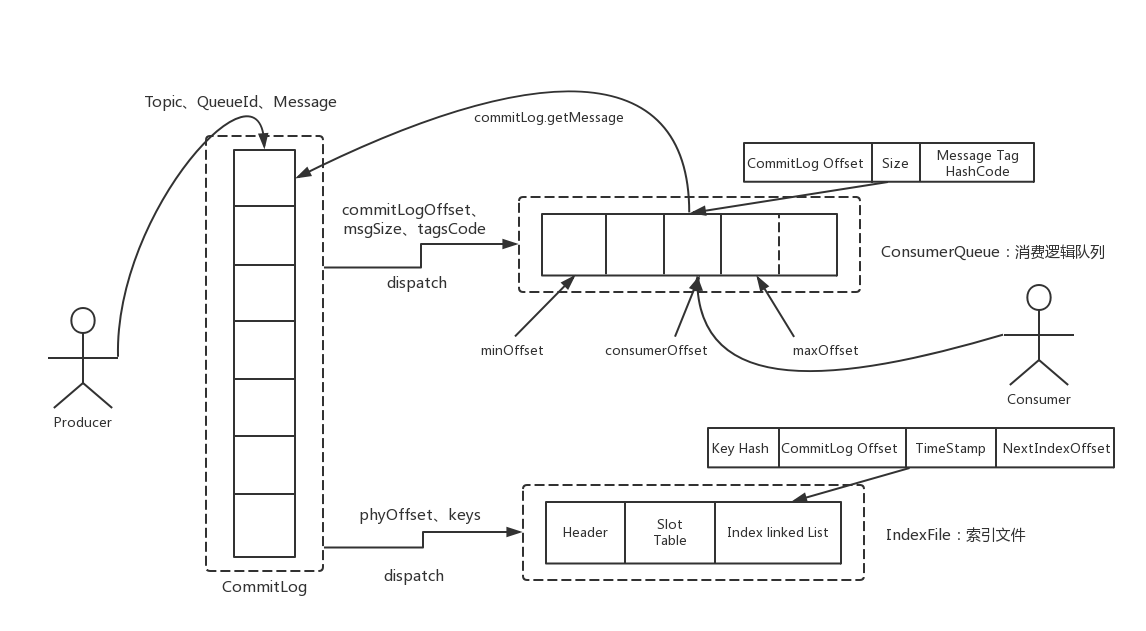

RocketMQ消息的存储是由ConsumeQueue和CommitLog配合完成 的,消息真正的物理存储文件是CommitLog,ConsumeQueue是消息的逻辑队列,类似数据库的索引文件,存储的是指向物理存储的地址。每 个Topic下的每个Message Queue都有一个对应的ConsumeQueue文件。

CommitLog:存储消息的元数据

ConsumerQueue:存储消息在CommitLog的索引

IndexFile:为了消息查询提供了一种通过key或时间区间来查询消息的方法,这种通过IndexFile来查找消息的方法不影响发送与消费消息的主流程

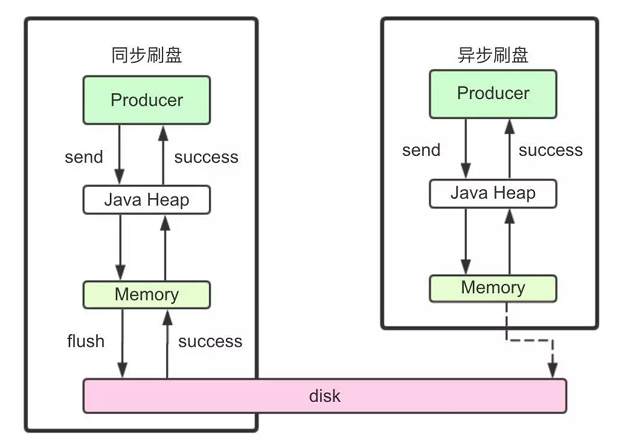

刷盘机制 RocketMQ的消息是存储到磁盘上的,这样既能保证断电后恢复, 又可以让存储的消息量超出内存的限制。RocketMQ为了提高性能,会尽可能地保证磁盘的顺序写。消息在通过Producer写入RocketMQ的时 候,有两种写磁盘方式,分布式同步刷盘和异步刷盘。

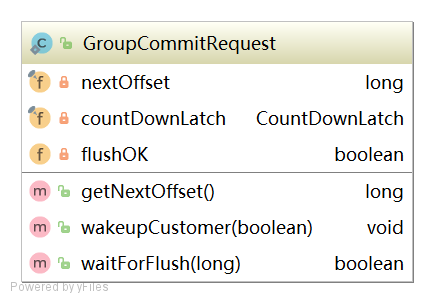

同步刷盘 在返回写成功状态时,消息已经被写入磁盘。具体流程是,消息写入内存的PAGECACHE后,立刻通知刷盘线程刷盘, 然后等待刷盘完成,刷盘线程执行完成后唤醒等待的线程,返回消息写 成功的状态。

异步刷盘 在返回写成功状态时,消息可能只是被写入了内存的PAGECACHE,写操作的返回快,吞吐量大;当内存里的消息量积累到一定程度时,统一触发写磁盘动作,快速写入。

配置 同步刷盘还是异步刷盘,都是通过Broker配置文件里的flushDiskType 参数设置的,这个参数被配置成SYNC_FLUSH、ASYNC_FLUSH中的 一个。

高可用性机制

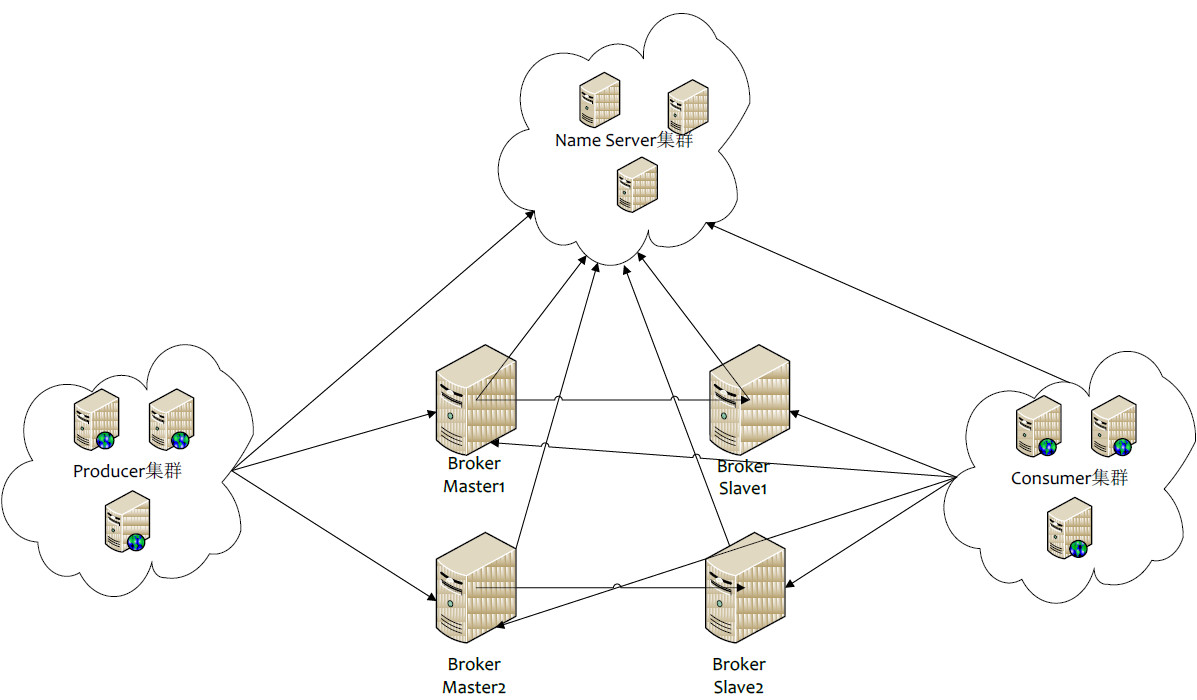

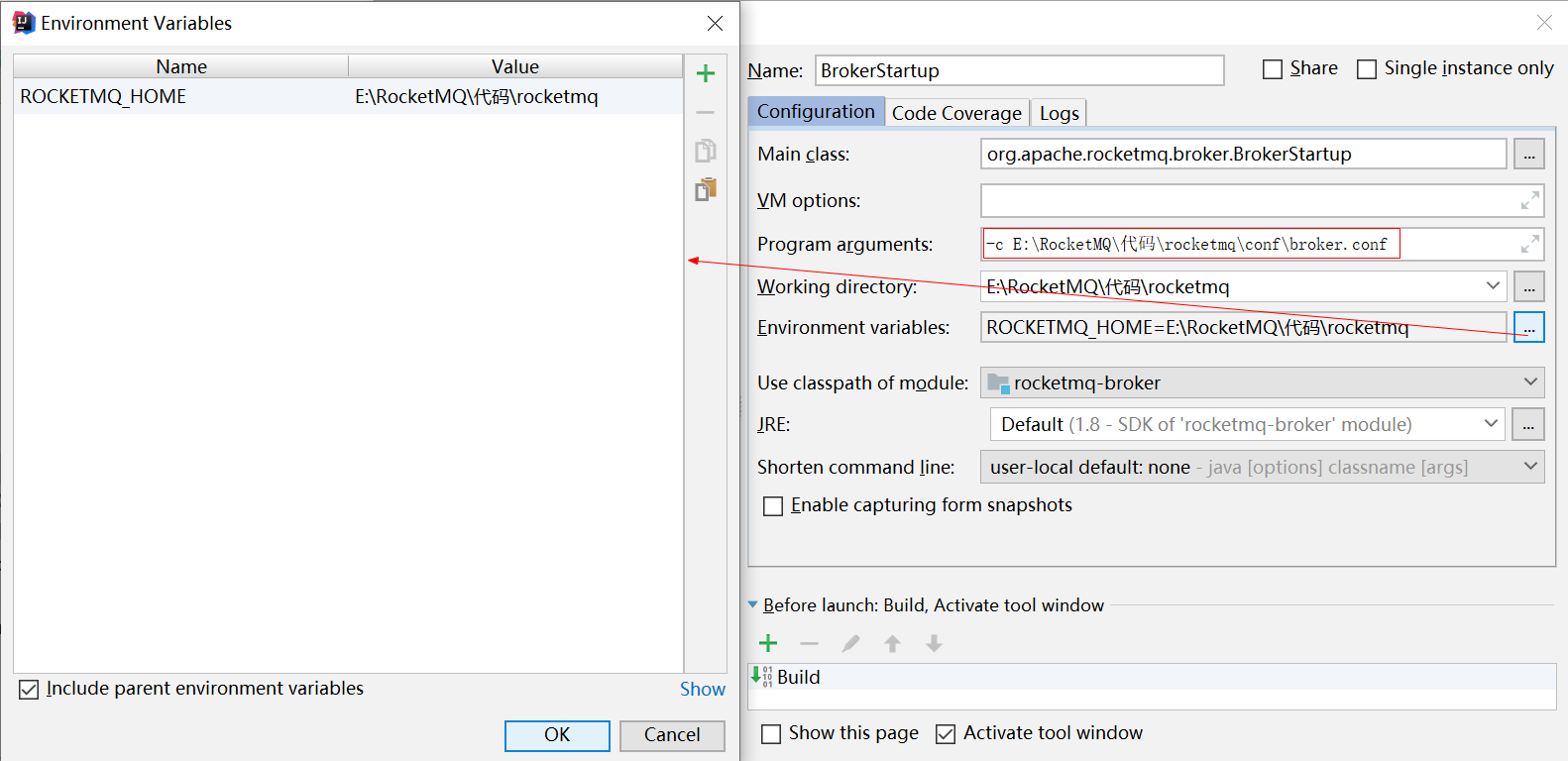

RocketMQ分布式集群是通过Master和Slave的配合达到高可用性的。

Master和Slave的区别:在Broker的配置文件中,参数 brokerId的值为0表明这个Broker是Master,大于0表明这个Broker是 Slave,同时brokerRole参数也会说明这个Broker是Master还是Slave。

Master角色的Broker支持读和写,Slave角色的Broker仅支持读,也就是 Producer只能和Master角色的Broker连接写入消息;Consumer可以连接 Master角色的Broker,也可以连接Slave角色的Broker来读取消息。

消息消费高可用 在Consumer的配置文件中,并不需要设置是从Master读还是从Slave 读,当Master不可用或者繁忙的时候,Consumer会被自动切换到从Slave 读。有了自动切换Consumer这种机制,当一个Master角色的机器出现故障后,Consumer仍然可以从Slave读取消息,不影响Consumer程序。这就达到了消费端的高可用性。

消息发送高可用 在创建Topic的时候,把Topic的多个Message Queue创建在多个Broker组上(相同Broker名称,不同 brokerId的机器组成一个Broker组),这样当一个Broker组的Master不可 用后,其他组的Master仍然可用,Producer仍然可以发送消息。 RocketMQ目前还不支持把Slave自动转成Master,如果机器资源不足, 需要把Slave转成Master,则要手动停止Slave角色的Broker,更改配置文 件,用新的配置文件启动Broker。

消息主从复制 如果一个Broker组有Master和Slave,消息需要从Master复制到Slave 上,有同步和异步两种复制方式。

同步复制 同步复制方式是等Master和Slave均写 成功后才反馈给客户端写成功状态;

在同步复制方式下,如果Master出故障, Slave上有全部的备份数据,容易恢复,但是同步复制会增大数据写入 延迟,降低系统吞吐量。

异步复制 异步复制方式是只要Master写成功 即可反馈给客户端写成功状态。

在异步复制方式下,系统拥有较低的延迟和较高的吞吐量,但是如果Master出了故障,有些数据因为没有被写 入Slave,有可能会丢失;

配置 同步复制和异步复制是通过Broker配置文件里的brokerRole参数进行设置的,这个参数可以被设置成ASYNC_MASTER、 SYNC_MASTER、SLAVE三个值中的一个。

总结

实际应用中要结合业务场景,合理设置刷盘方式和主从复制方式, 尤其是SYNC_FLUSH方式,由于频繁地触发磁盘写动作,会明显降低 性能。通常情况下,应该把Master和Save配置成ASYNC_FLUSH的刷盘 方式,主从之间配置成SYNC_MASTER的复制方式,这样即使有一台 机器出故障,仍然能保证数据不丢,是个不错的选择。

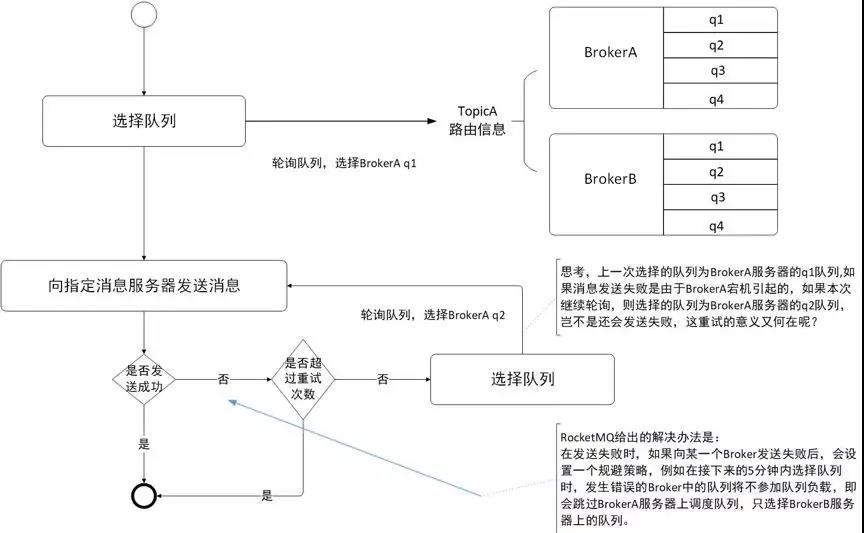

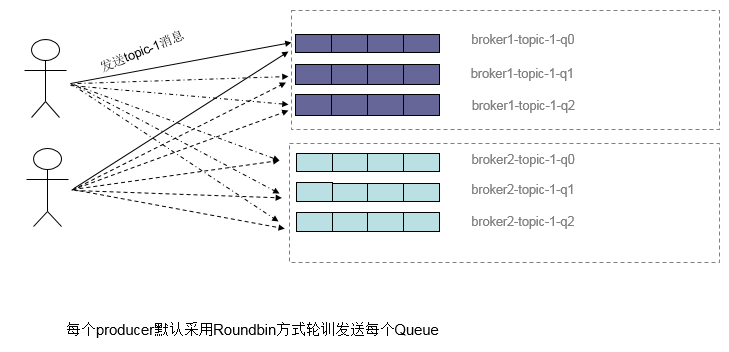

负载均衡 Producer负载均衡 Producer端,每个实例在发消息的时候,默认会轮询所有的message queue发送,以达到让消息平均落在不同的queue上。而由于queue可以散落在不同的broker,所以消息就发送到不同的broker下,如下图:

图中箭头线条上的标号代表顺序,发布方会把第一条消息发送至 Queue 0,然后第二条消息发送至 Queue 1,以此类推。

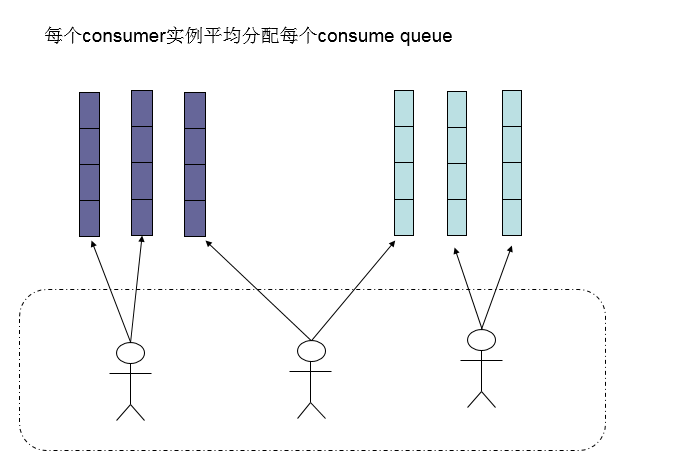

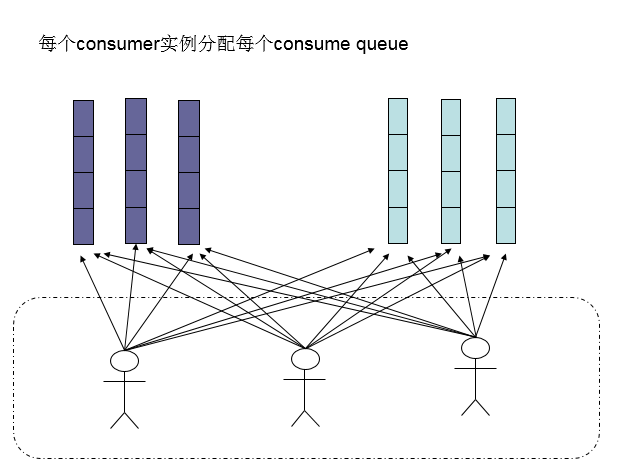

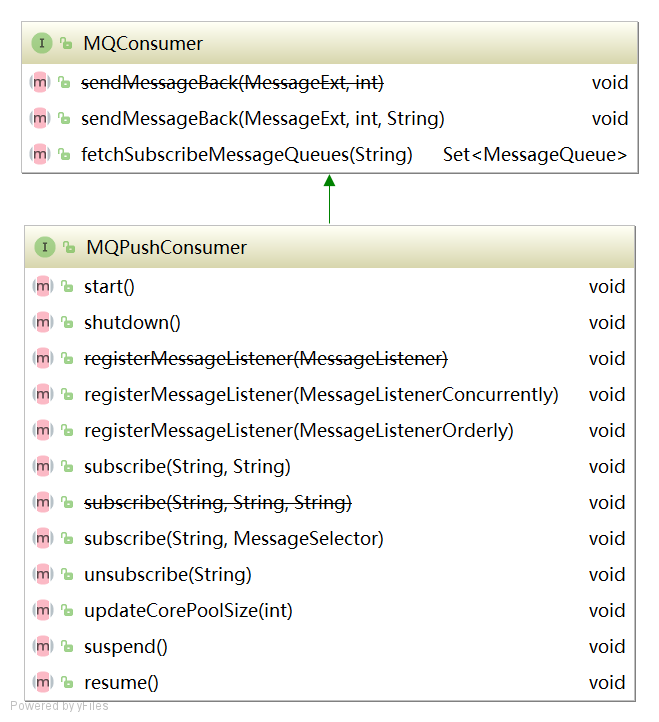

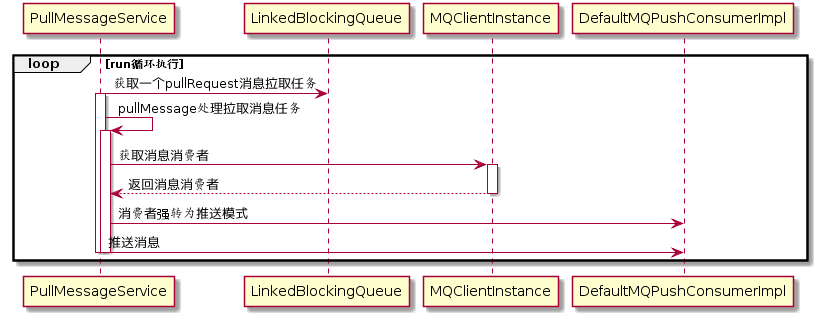

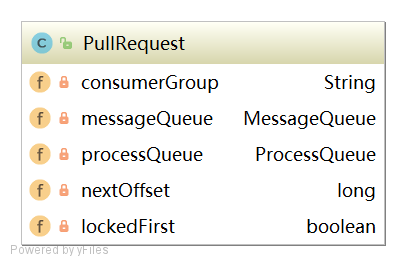

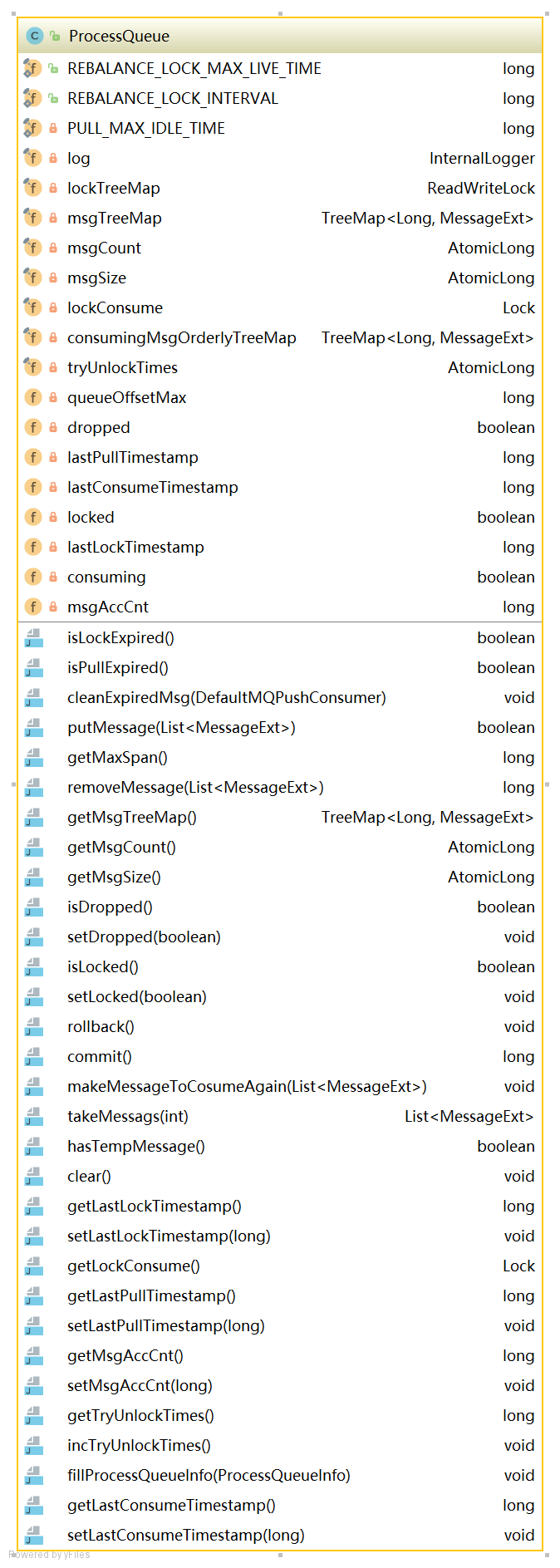

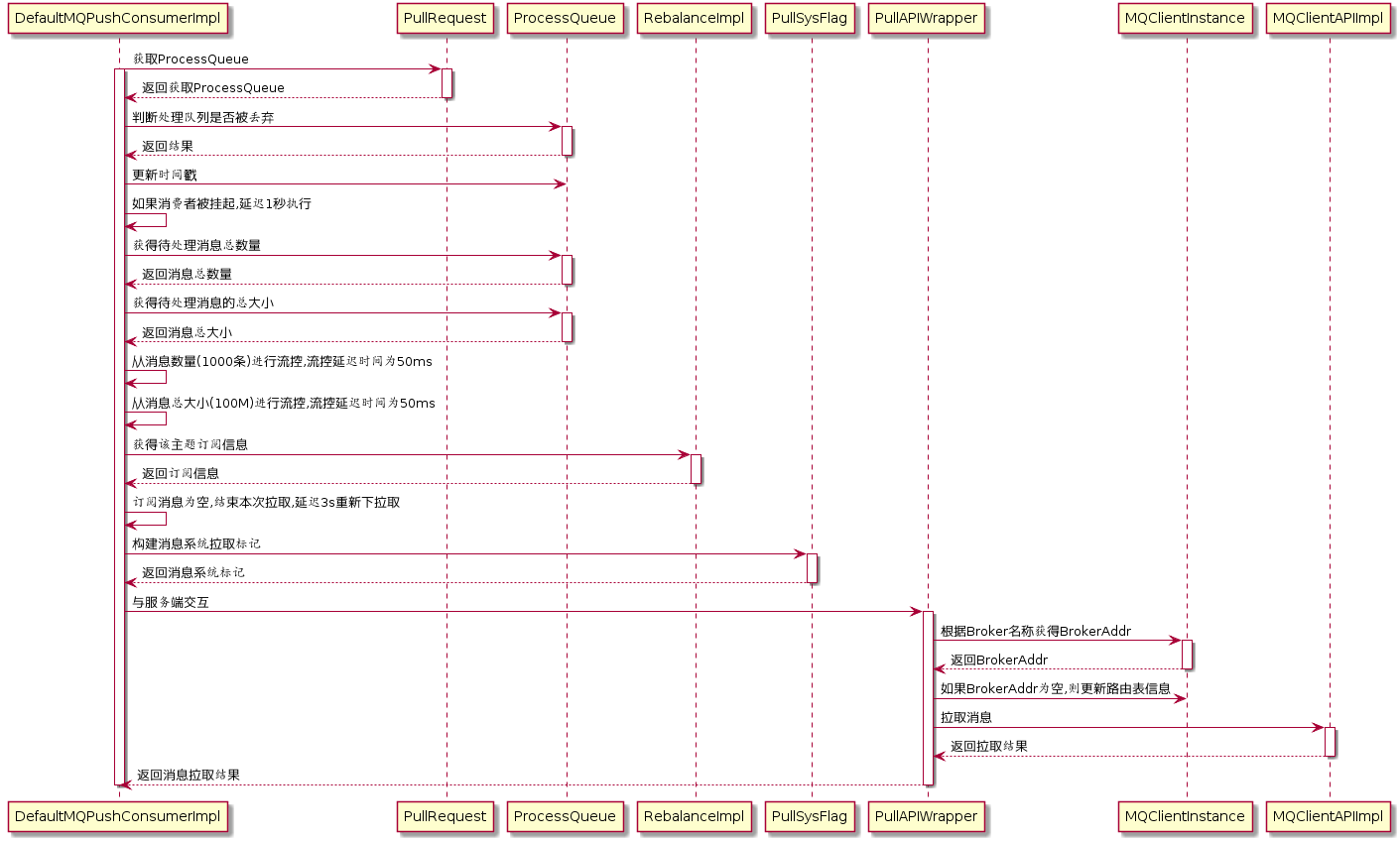

Consumer负载均衡 集群模式 在集群消费模式下,每条消息只需要投递到订阅这个topic的Consumer Group下的一个实例即可。RocketMQ采用主动拉取的方式拉取并消费消息,在拉取的时候需要明确指定拉取哪一条message queue。

而每当实例的数量有变更,都会触发一次所有实例的负载均衡,这时候会按照queue的数量和实例的数量平均分配queue给每个实例。

默认的分配算法是AllocateMessageQueueAveragely,如下图:

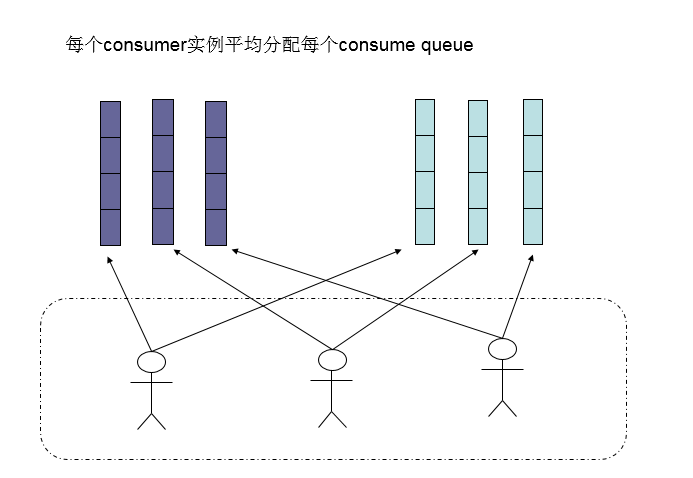

还有另外一种平均的算法是AllocateMessageQueueAveragelyByCircle,也是平均分摊每一条queue,只是以环状轮流分queue的形式,如下图:

需要注意的是,集群模式下,queue都是只允许分配只一个实例,这是由于如果多个实例同时消费一个queue的消息,由于拉取哪些消息是consumer主动控制的,那样会导致同一个消息在不同的实例下被消费多次,所以算法上都是一个queue只分给一个consumer实例,一个consumer实例可以允许同时分到不同的queue。

通过增加consumer实例去分摊queue的消费,可以起到水平扩展的消费能力的作用。而有实例下线的时候,会重新触发负载均衡,这时候原来分配到的queue将分配到其他实例上继续消费。

但是如果consumer实例的数量比message queue的总数量还多的话,多出来的consumer实例将无法分到queue,也就无法消费到消息,也就无法起到分摊负载的作用了。所以需要控制让queue的总数量大于等于consumer的数量。

广播模式 由于广播模式下要求一条消息需要投递到一个消费组下面所有的消费者实例,所以也就没有消息被分摊消费的说法。

在实现上,其中一个不同就是在consumer分配queue的时候,所有consumer都分到所有的queue。

消息重试 顺序消息的重试 对于顺序消息,当消费者消费消息失败后,消息队列 RocketMQ 会自动不断进行消息重试(每次间隔时间为 1 秒),这时,应用会出现消息消费被阻塞的情况。因此,在使用顺序消息时,务必保证应用能够及时监控并处理消费失败的情况,避免阻塞现象的发生。

无序消息的重试 对于无序消息(普通、定时、延时、事务消息),当消费者消费消息失败时,您可以通过设置返回状态达到消息重试的结果。

无序消息的重试只针对集群消费方式生效;广播方式不提供失败重试特性,即消费失败后,失败消息不再重试,继续消费新的消息。

重试次数 消息队列 RocketMQ 默认允许每条消息最多重试 16 次,每次重试的间隔时间如下:

第几次重试

与上次重试的间隔时间

第几次重试

与上次重试的间隔时间

1

10 秒

9

7 分钟

2

30 秒

10

8 分钟

3

1 分钟

11

9 分钟

4

2 分钟

12

10 分钟

5

3 分钟

13

20 分钟

6

4 分钟

14

30 分钟

7

5 分钟

15

1 小时

8

6 分钟

16

2 小时

如果消息重试 16 次后仍然失败,消息将不再投递。如果严格按照上述重试时间间隔计算,某条消息在一直消费失败的前提下,将会在接下来的 4 小时 46 分钟之内进行 16 次重试,超过这个时间范围消息将不再重试投递。

注意: 一条消息无论重试多少次,这些重试消息的 Message ID 不会改变。

配置方式 消费失败后,重试配置方式

集群消费方式下,消息消费失败后期望消息重试,需要在消息监听器接口的实现中明确进行配置(三种方式任选一种):

返回 Action.ReconsumeLater (推荐)

返回 Null

抛出异常

1 2 3 4 5 6 7 8 9 10 11 12 13 public class MessageListenerImpl implements MessageListener @Override public Action consume (Message message, ConsumeContext context) doConsumeMessage(message); return Action.ReconsumeLater; return null ; throw new RuntimeException("Consumer Message exceotion" ); } }

消费失败后,不重试配置方式

集群消费方式下,消息失败后期望消息不重试,需要捕获消费逻辑中可能抛出的异常,最终返回 Action.CommitMessage,此后这条消息将不会再重试。

1 2 3 4 5 6 7 8 9 10 11 12 13 public class MessageListenerImpl implements MessageListener @Override public Action consume (Message message, ConsumeContext context) try { doConsumeMessage(message); } catch (Throwable e) { return Action.CommitMessage; } return Action.CommitMessage; } }

自定义消息最大重试次数

消息队列 RocketMQ 允许 Consumer 启动的时候设置最大重试次数,重试时间间隔将按照如下策略:

最大重试次数小于等于 16 次,则重试时间间隔同上表描述。

最大重试次数大于 16 次,超过 16 次的重试时间间隔均为每次 2 小时。

1 2 3 4 Properties properties = new Properties(); properties.put(PropertyKeyConst.MaxReconsumeTimes,"20" ); Consumer consumer =ONSFactory.createConsumer(properties);

注意:

消息最大重试次数的设置对相同 Group ID 下的所有 Consumer 实例有效。

如果只对相同 Group ID 下两个 Consumer 实例中的其中一个设置了 MaxReconsumeTimes,那么该配置对两个 Consumer 实例均生效。

配置采用覆盖的方式生效,即最后启动的 Consumer 实例会覆盖之前的启动实例的配置

获取消息重试次数

消费者收到消息后,可按照如下方式获取消息的重试次数:

1 2 3 4 5 6 7 8 public class MessageListenerImpl implements MessageListener @Override public Action consume (Message message, ConsumeContext context) System.out.println(message.getReconsumeTimes()); return Action.CommitMessage; } }

死信队列 当一条消息初次消费失败,消息队列 RocketMQ 会自动进行消息重试;达到最大重试次数后,若消费依然失败,则表明消费者在正常情况下无法正确地消费该消息,此时,消息队列 RocketMQ 不会立刻将消息丢弃,而是将其发送到该消费者对应的特殊队列中。

在消息队列 RocketMQ 中,这种正常情况下无法被消费的消息称为死信消息(Dead-Letter Message),存储死信消息的特殊队列称为死信队列(Dead-Letter Queue)。

死信特性 死信消息具有以下特性

不会再被消费者正常消费。

有效期与正常消息相同,均为 3 天,3 天后会被自动删除。因此,请在死信消息产生后的 3 天内及时处理。

死信队列具有以下特性:

一个死信队列对应一个 Group ID, 而不是对应单个消费者实例。

如果一个 Group ID 未产生死信消息,消息队列 RocketMQ 不会为其创建相应的死信队列。

一个死信队列包含了对应 Group ID 产生的所有死信消息,不论该消息属于哪个 Topic。

查看死信信息

在控制台查询出现死信队列的主题信息

在消息界面根据主题查询死信消息

选择重新发送消息

一条消息进入死信队列,意味着某些因素导致消费者无法正常消费该消息,因此,通常需要您对其进行特殊处理。排查可疑因素并解决问题后,可以在消息队列 RocketMQ 控制台重新发送该消息,让消费者重新消费一次。

消费幂等 消息队列 RocketMQ 消费者在接收到消息以后,有必要根据业务上的唯一 Key 对消息做幂等处理的必要性。

消费幂等的必要性 在互联网应用中,尤其在网络不稳定的情况下,消息队列 RocketMQ 的消息有可能会出现重复,这个重复简单可以概括为以下情况:

发送时消息重复

当一条消息已被成功发送到服务端并完成持久化,此时出现了网络闪断或者客户端宕机,导致服务端对客户端应答失败。 如果此时生产者意识到消息发送失败并尝试再次发送消息,消费者后续会收到两条内容相同并且 Message ID 也相同的消息。

投递时消息重复

消息消费的场景下,消息已投递到消费者并完成业务处理,当客户端给服务端反馈应答的时候网络闪断。 为了保证消息至少被消费一次,消息队列 RocketMQ 的服务端将在网络恢复后再次尝试投递之前已被处理过的消息,消费者后续会收到两条内容相同并且 Message ID 也相同的消息。

负载均衡时消息重复(包括但不限于网络抖动、Broker 重启以及订阅方应用重启)

当消息队列 RocketMQ 的 Broker 或客户端重启、扩容或缩容时,会触发 Rebalance,此时消费者可能会收到重复消息。

处理方式 因为 Message ID 有可能出现冲突(重复)的情况,所以真正安全的幂等处理,不建议以 Message ID 作为处理依据。 最好的方式是以业务唯一标识作为幂等处理的关键依据,而业务的唯一标识可以通过消息 Key 进行设置:

1 2 3 Message message = new Message(); message.setKey("ORDERID_100" ); SendResult sendResult = producer.send(message);

订阅方收到消息时可以根据消息的 Key 进行幂等处理:

1 2 3 4 5 6 consumer.subscribe("ons_test" , "*" , new MessageListener() { public Action consume (Message message, ConsumeContext context) String key = message.getKey() } });

源码分析 环境搭建 依赖工具

JDK :1.8+

Maven

IntelliJ IDEA

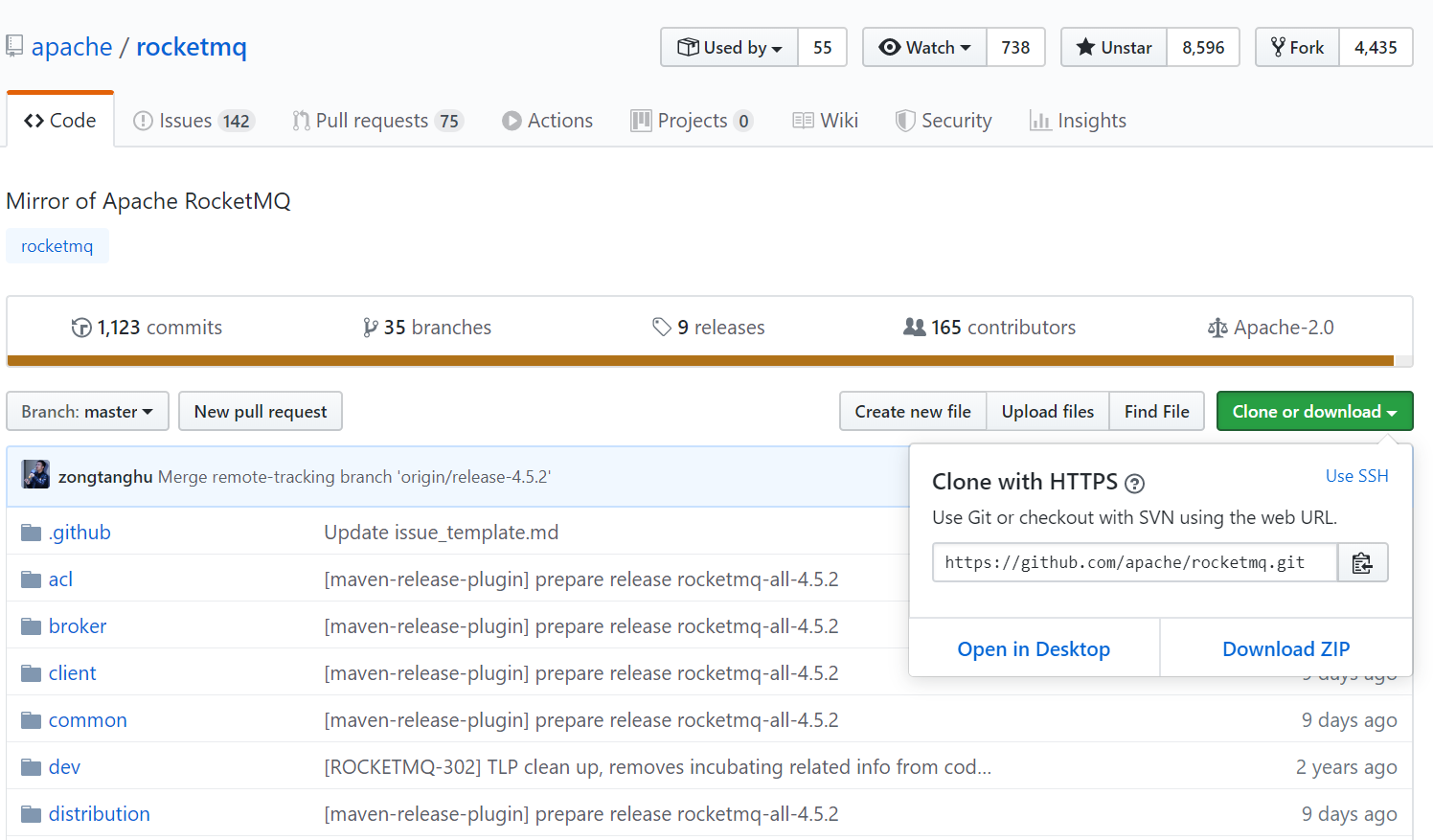

源码拉取 从官方仓库 https://github.com/apache/rocketmq clone或者download源码。

源码目录结构:

broker: broker 模块(broke 启动进程)

client :消息客户端,包含消息生产者、消息消费者相关类

common :公共包

dev :开发者信息(非源代码)

distribution :部署实例文件夹(非源代码)

example: RocketMQ 例代码

filter :消息过滤相关基础类

filtersrv:消息过滤服务器实现相关类(Filter启动进程)

logappender:日志实现相关类

namesrv:NameServer实现相关类(NameServer启动进程)

openmessageing:消息开放标准

remoting:远程通信模块,给予Netty

srcutil:服务工具类

store:消息存储实现相关类

style:checkstyle相关实现

test:测试相关类

tools:工具类,监控命令相关实现类

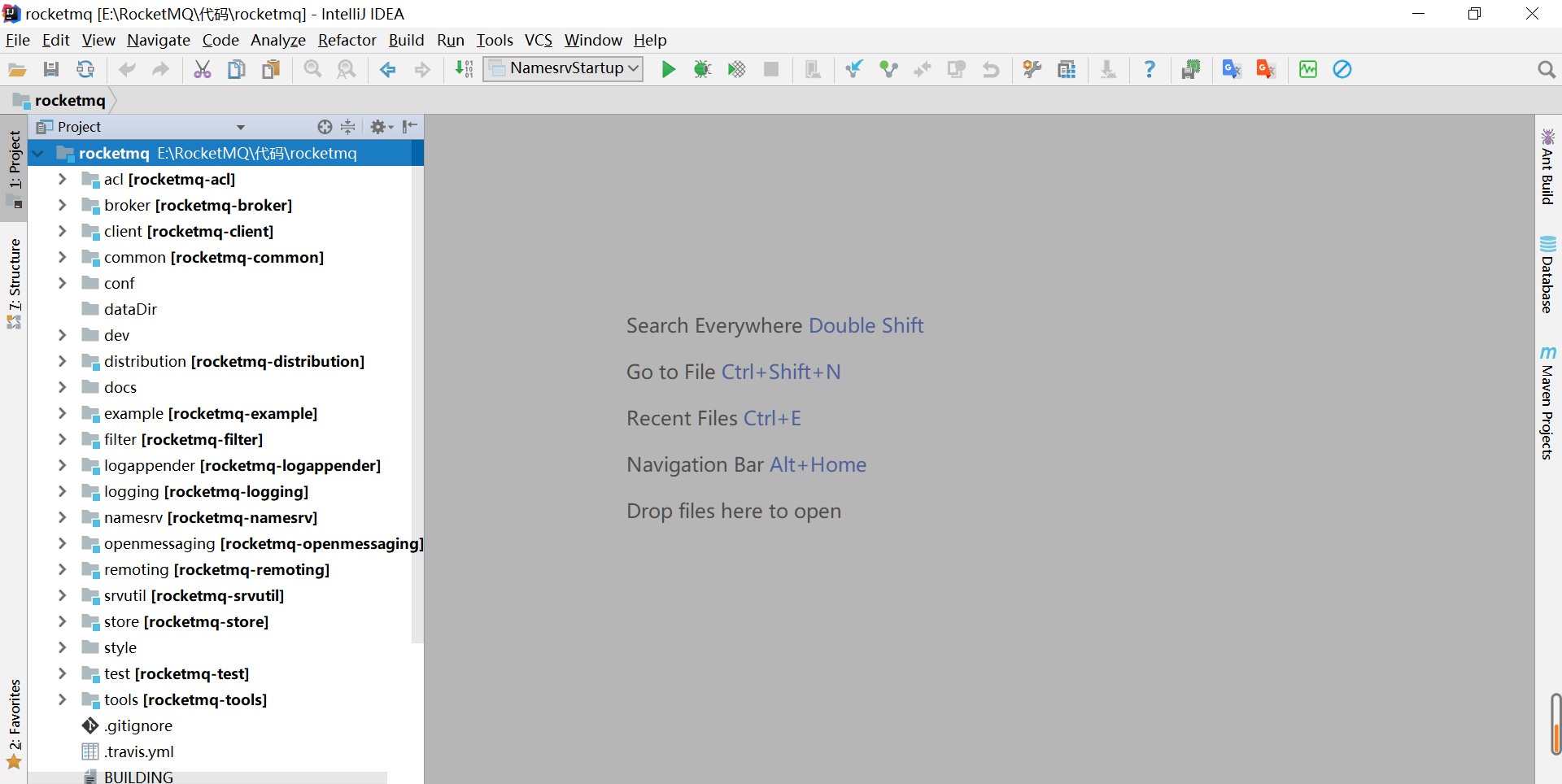

导入IDEA

执行安装

1 clean install -Dmaven.test.skip=true

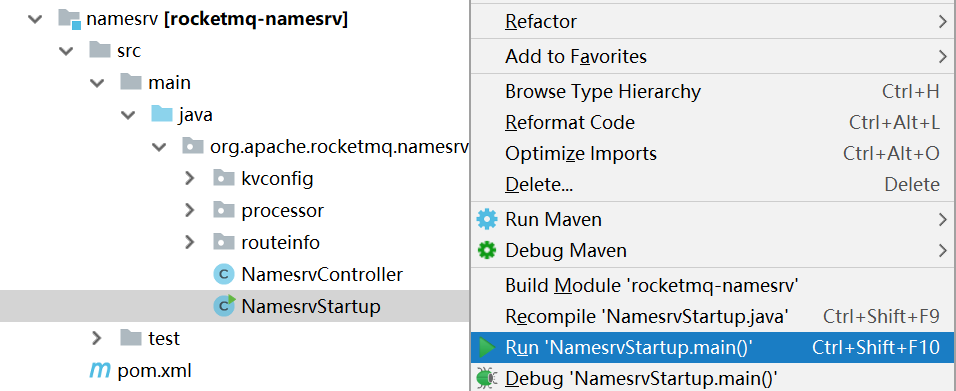

调试 创建conf配置文件夹,从distribution拷贝broker.conf和logback_broker.xml和logback_namesrv.xml

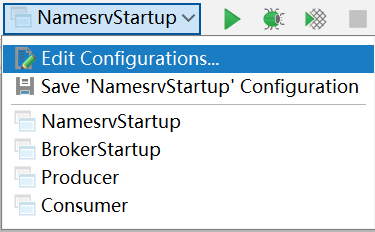

启动NameServer

展开namesrv模块,右键NamesrvStartup.java

1 The Name Server boot success. serializeType=JSON

启动Broker

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 brokerClusterName = DefaultCluster brokerName = broker-a brokerId = 0 # namesrvAddr地址 namesrvAddr=127.0.0.1:9876 deleteWhen = 04 fileReservedTime = 48 brokerRole = ASYNC_MASTER flushDiskType = ASYNC_FLUSH autoCreateTopicEnable=true # 存储路径 storePathRootDir=E:\\RocketMQ\\data\\rocketmq\\dataDir # commitLog路径 storePathCommitLog=E:\\RocketMQ\\data\\rocketmq\\dataDir\\commitlog # 消息队列存储路径 storePathConsumeQueue=E:\\RocketMQ\\data\\rocketmq\\dataDir\\consumequeue # 消息索引存储路径 storePathIndex=E:\\RocketMQ\\data\\rocketmq\\dataDir\\index # checkpoint文件路径 storeCheckpoint=E:\\RocketMQ\\data\\rocketmq\\dataDir\\checkpoint # abort文件存储路径 abortFile=E:\\RocketMQ\\data\\rocketmq\\dataDir\\abort

创建数据文件夹dataDir

启动BrokerStartup,配置broker.conf和ROCKETMQ_HOME

发送消息

进入example模块的org.apache.rocketmq.example.quickstart

指定Namesrv地址

1 2 DefaultMQProducer producer = new DefaultMQProducer("please_rename_unique_group_name" ); producer.setNamesrvAddr("127.0.0.1:9876" );

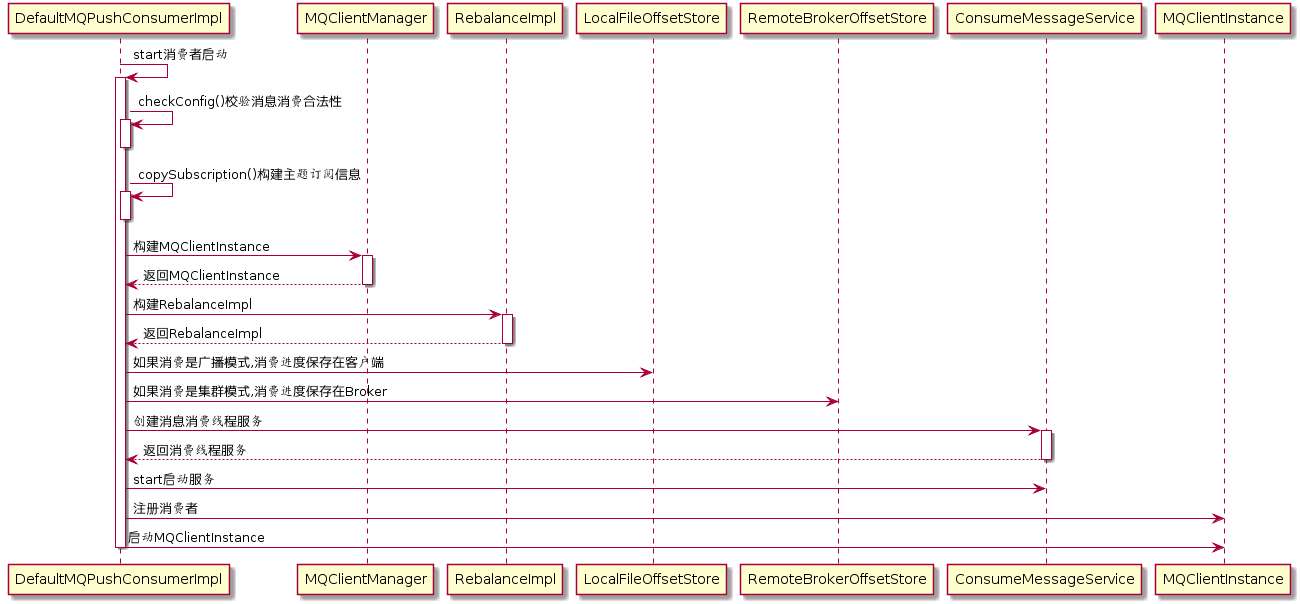

消费消息

进入example模块的org.apache.rocketmq.example.quickstart

指定Namesrv地址

1 2 DefaultMQPushConsumer consumer = new DefaultMQPushConsumer("please_rename_unique_group_name_4" ); consumer.setNamesrvAddr("127.0.0.1:9876" );

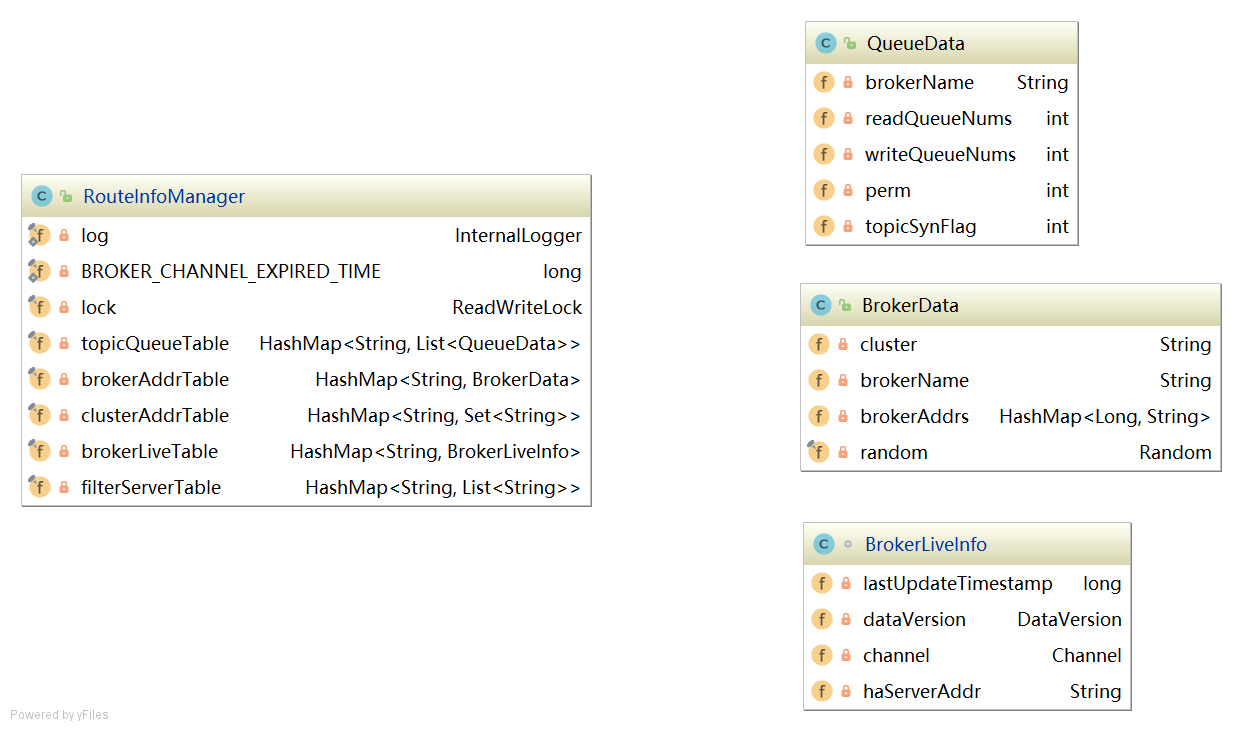

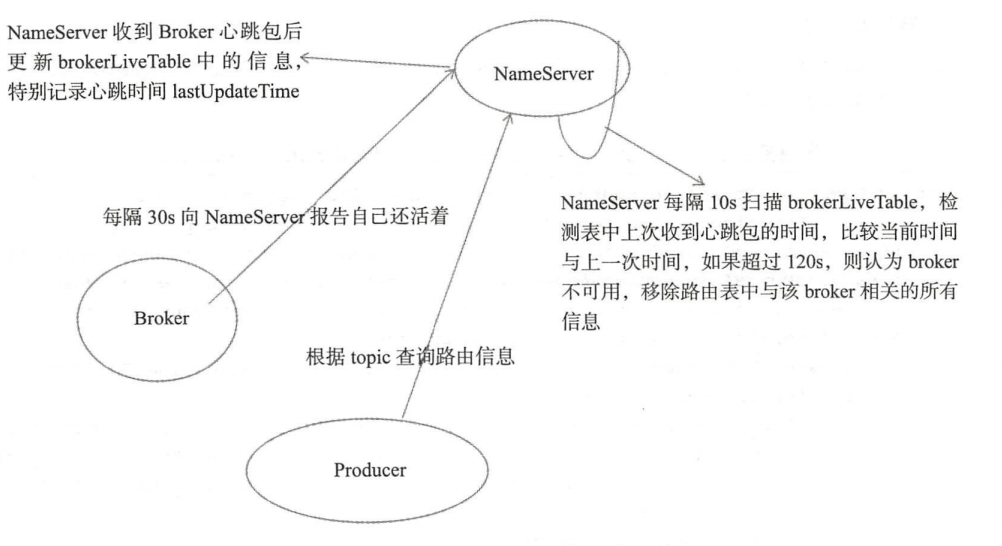

NameServer 架构设计 消息中间件的设计思路一般是基于主题订阅发布的机制,消息生产者(Producer)发送某一个主题到消息服务器,消息服务器负责将消息持久化存储,消息消费者(Consumer)订阅该兴趣的主题,消息服务器根据订阅信息(路由信息)将消息推送到消费者(Push模式)或者消费者主动向消息服务器拉去(Pull模式),从而实现消息生产者与消息消费者解耦。为了避免消息服务器的单点故障导致的整个系统瘫痪,通常会部署多台消息服务器共同承担消息的存储。那消息生产者如何知道消息要发送到哪台消息服务器呢?如果某一台消息服务器宕机了,那么消息生产者如何在不重启服务情况下感知呢?

NameServer就是为了解决以上问题设计的。

Broker消息服务器在启动的时向所有NameServer注册,消息生产者(Producer)在发送消息时之前先从NameServer获取Broker服务器地址列表,然后根据负载均衡算法从列表中选择一台服务器进行发送。NameServer与每台Broker保持长连接,并间隔30S检测Broker是否存活,如果检测到Broker宕机,则从路由注册表中删除。但是路由变化不会马上通知消息生产者。这样设计的目的是为了降低NameServer实现的复杂度,在消息发送端提供容错机制保证消息发送的可用性。

NameServer本身的高可用是通过部署多台NameServer来实现,但彼此之间不通讯,也就是NameServer服务器之间在某一个时刻的数据并不完全相同,但这对消息发送并不会造成任何影响,这也是NameServer设计的一个亮点,总之,RocketMQ设计追求简单高效。

启动流程

启动类:org.apache.rocketmq.namesrv.NamesrvStartup

步骤一 解析配置文件,填充NameServerConfig、NettyServerConfig属性值,并创建NamesrvController

代码:NamesrvController#createNamesrvController

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 final NamesrvConfig namesrvConfig = new NamesrvConfig();final NettyServerConfig nettyServerConfig = new NettyServerConfig();nettyServerConfig.setListenPort(9876 ); if (commandLine.hasOption('c' )) { String file = commandLine.getOptionValue('c' ); if (file != null ) { InputStream in = new BufferedInputStream(new FileInputStream(file)); properties = new Properties(); properties.load(in); MixAll.properties2Object(properties, namesrvConfig); MixAll.properties2Object(properties, nettyServerConfig); namesrvConfig.setConfigStorePath(file); System.out.printf("load config properties file OK, %s%n" , file); in.close(); } } if (commandLine.hasOption('p' )) { InternalLogger console = InternalLoggerFactory.getLogger(LoggerName.NAMESRV_CONSOLE_NAME); MixAll.printObjectProperties(console, namesrvConfig); MixAll.printObjectProperties(console, nettyServerConfig); System.exit(0 ); } MixAll.properties2Object(ServerUtil.commandLine2Properties(commandLine), namesrvConfig); final NamesrvController controller = new NamesrvController(namesrvConfig, nettyServerConfig);

NamesrvConfig属性

1 2 3 4 5 6 private String rocketmqHome = System.getProperty(MixAll.ROCKETMQ_HOME_PROPERTY, System.getenv(MixAll.ROCKETMQ_HOME_ENV));private String kvConfigPath = System.getProperty("user.home" ) + File.separator + "namesrv" + File.separator + "kvConfig.json" ;private String configStorePath = System.getProperty("user.home" ) + File.separator + "namesrv" + File.separator + "namesrv.properties" ;private String productEnvName = "center" ;private boolean clusterTest = false ;private boolean orderMessageEnable = false ;

rocketmqHome: rocketmq主目录

kvConfig: NameServer存储KV配置属性的持久化路径

configStorePath: nameServer默认配置文件路径

orderMessageEnable: 是否支持顺序消息

NettyServerConfig属性

1 2 3 4 5 6 7 8 9 10 11 private int listenPort = 8888 ;private int serverWorkerThreads = 8 ;private int serverCallbackExecutorThreads = 0 ;private int serverSelectorThreads = 3 ;private int serverOnewaySemaphoreValue = 256 ;private int serverAsyncSemaphoreValue = 64 ;private int serverChannelMaxIdleTimeSeconds = 120 ;private int serverSocketSndBufSize = NettySystemConfig.socketSndbufSize;private int serverSocketRcvBufSize = NettySystemConfig.socketRcvbufSize;private boolean serverPooledByteBufAllocatorEnable = true ;private boolean useEpollNativeSelector = false ;

listenPort: NameServer监听端口,该值默认会被初始化为9876serverWorkerThreads: Netty业务线程池线程个数serverCallbackExecutorThreads: Netty public任务线程池线程个数,Netty网络设计,根据业务类型会创建不同的线程池,比如处理消息发送、消息消费、心跳检测等。如果该业务类型未注册线程池,则由public线程池执行。serverSelectorThreads: IO线程池个数,主要是NameServer、Broker端解析请求、返回相应的线程个数,这类线程主要是处理网路请求的,解析请求包,然后转发到各个业务线程池完成具体的操作,然后将结果返回给调用方;serverOnewaySemaphoreValue: send oneway消息请求并发读(Broker端参数);serverAsyncSemaphoreValue: 异步消息发送最大并发度;serverChannelMaxIdleTimeSeconds : 网络连接最大的空闲时间,默认120s。serverSocketSndBufSize: 网络socket发送缓冲区大小。serverSocketRcvBufSize: 网络接收端缓存区大小。serverPooledByteBufAllocatorEnable: ByteBuffer是否开启缓存;useEpollNativeSelector: 是否启用Epoll IO模型。

步骤二 根据启动属性创建NamesrvController实例,并初始化该实例。NameServerController实例为NameServer核心控制器

代码:NamesrvController#initialize

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 public boolean initialize () this .kvConfigManager.load(); this .remotingServer = new NettyRemotingServer(this .nettyServerConfig, this .brokerHousekeepingService); this .remotingExecutor = Executors.newFixedThreadPool(nettyServerConfig.getServerWorkerThreads(), new ThreadFactoryImpl("RemotingExecutorThread_" )); this .registerProcessor(); this .scheduledExecutorService.scheduleAtFixedRate(new Runnable() { @Override public void run () NamesrvController.this .routeInfoManager.scanNotActiveBroker(); } }, 5 , 10 , TimeUnit.SECONDS); this .scheduledExecutorService.scheduleAtFixedRate(new Runnable() { @Override public void run () NamesrvController.this .kvConfigManager.printAllPeriodically(); } }, 1 , 10 , TimeUnit.MINUTES); return true ; }

步骤三 在JVM进程关闭之前,先将线程池关闭,及时释放资源

代码:NamesrvStartup#start

1 2 3 4 5 6 7 8 9 Runtime.getRuntime().addShutdownHook(new ShutdownHookThread(log, new Callable<Void>() { @Override public Void call () throws Exception controller.shutdown(); return null ; } }));

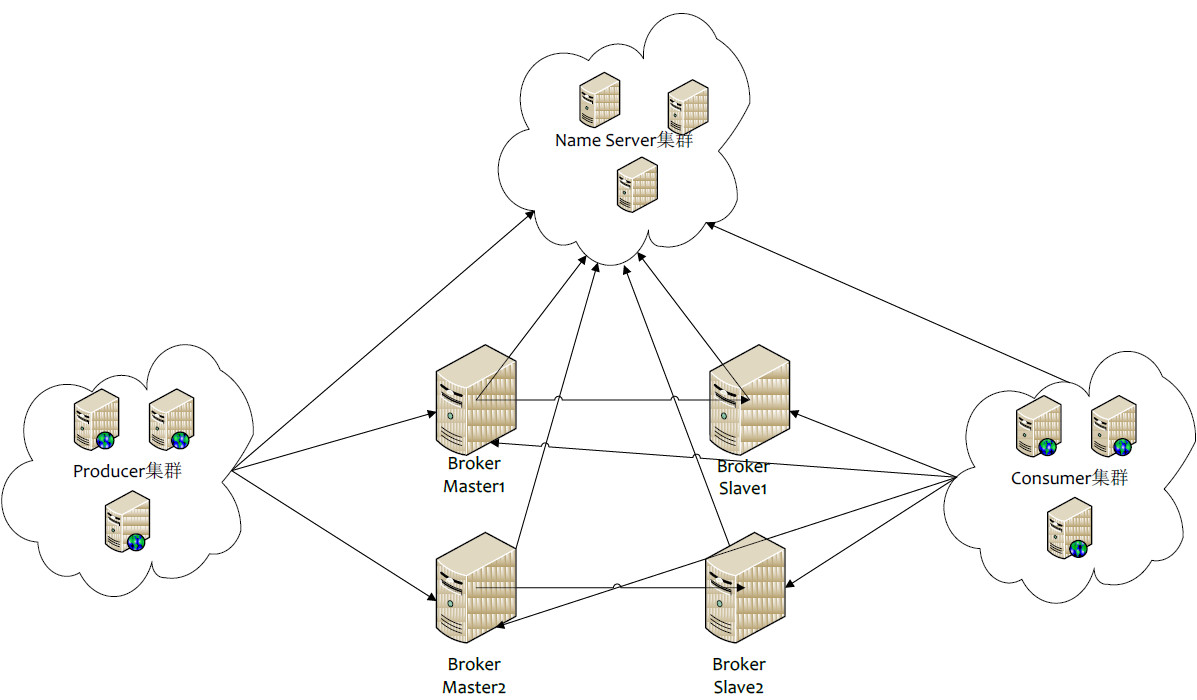

路由管理 NameServer的主要作用是为消息的生产者和消息消费者提供关于主题Topic的路由信息,那么NameServer需要存储路由的基础信息,还要管理Broker节点,包括路由注册、路由删除等。

路由元信息 代码:RouteInfoManager

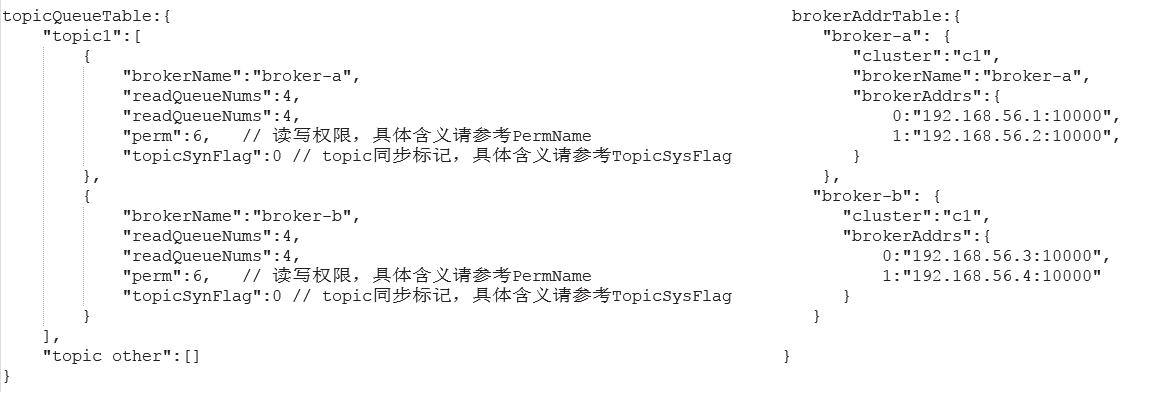

1 2 3 4 5 private final HashMap<String, List<QueueData>> topicQueueTable;private final HashMap<String, BrokerData> brokerAddrTable;private final HashMap<String, Set<String>> clusterAddrTable;private final HashMap<String, BrokerLiveInfo> brokerLiveTable;private final HashMap<String, List<String>> filterServerTable;

topicQueueTable: Topic消息队列路由信息,消息发送时根据路由表进行负载均衡

brokerAddrTable: Broker基础信息,包括brokerName、所属集群名称、主备Broker地址

clusterAddrTable: Broker集群信息,存储集群中所有Broker名称

brokerLiveTable: Broker状态信息,NameServer每次收到心跳包是会替换该信息

filterServerTable: Broker上的FilterServer列表,用于类模式消息过滤。

RocketMQ基于定于发布机制,一个Topic拥有多个消息队列,一个Broker为每一个主题创建4个读队列和4个写队列。多个Broker组成一个集群,集群由相同的多台Broker组成Master-Slave架构,brokerId为0代表Master,大于0为Slave。BrokerLiveInfo中的lastUpdateTimestamp存储上次收到Broker心跳包的时间。

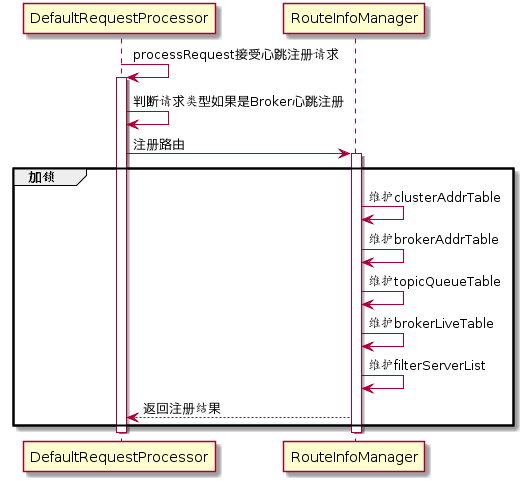

路由注册 发送心跳包

RocketMQ路由注册是通过Broker与NameServer的心跳功能实现的。Broker启动时向集群中所有的NameServer发送心跳信息,每隔30s向集群中所有NameServer发送心跳包,NameServer收到心跳包时会更新brokerLiveTable缓存中BrokerLiveInfo的lastUpdataTimeStamp信息,然后NameServer每隔10s扫描brokerLiveTable,如果连续120S没有收到心跳包,NameServer将移除Broker的路由信息同时关闭Socket连接。

代码:BrokerController#start

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 this .registerBrokerAll(true , false , true );this .scheduledExecutorService.scheduleAtFixedRate(new Runnable() { @Override public void run () try { BrokerController.this .registerBrokerAll(true , false , brokerConfig.isForceRegister()); } catch (Throwable e) { log.error("registerBrokerAll Exception" , e); } } }, 1000 * 10 , Math.max(10000 , Math.min(brokerConfig.getRegisterNameServerPeriod(), 60000 )), TimeUnit.MILLISECONDS);

代码:BrokerOuterAPI#registerBrokerAll

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 List<String> nameServerAddressList = this .remotingClient.getNameServerAddressList(); if (nameServerAddressList != null && nameServerAddressList.size() > 0 ) { final RegisterBrokerRequestHeader requestHeader = new RegisterBrokerRequestHeader(); requestHeader.setBrokerAddr(brokerAddr); requestHeader.setBrokerId(brokerId); requestHeader.setBrokerName(brokerName); requestHeader.setClusterName(clusterName); requestHeader.setHaServerAddr(haServerAddr); requestHeader.setCompressed(compressed); RegisterBrokerBody requestBody = new RegisterBrokerBody(); requestBody.setTopicConfigSerializeWrapper(topicConfigWrapper); requestBody.setFilterServerList(filterServerList); final byte [] body = requestBody.encode(compressed); final int bodyCrc32 = UtilAll.crc32(body); requestHeader.setBodyCrc32(bodyCrc32); final CountDownLatch countDownLatch = new CountDownLatch(nameServerAddressList.size()); for (final String namesrvAddr : nameServerAddressList) { brokerOuterExecutor.execute(new Runnable() { @Override public void run () try { RegisterBrokerResult result = registerBroker(namesrvAddr,oneway, timeoutMills,requestHeader,body); if (result != null ) { registerBrokerResultList.add(result); } log.info("register broker[{}]to name server {} OK" , brokerId, namesrvAddr); } catch (Exception e) { log.warn("registerBroker Exception, {}" , namesrvAddr, e); } finally { countDownLatch.countDown(); } } }); } try { countDownLatch.await(timeoutMills, TimeUnit.MILLISECONDS); } catch (InterruptedException e) { } }

代码:BrokerOutAPI#registerBroker

1 2 3 4 5 6 7 8 9 if (oneway) { try { this .remotingClient.invokeOneway(namesrvAddr, request, timeoutMills); } catch (RemotingTooMuchRequestException e) { } return null ; } RemotingCommand response = this .remotingClient.invokeSync(namesrvAddr, request, timeoutMills);

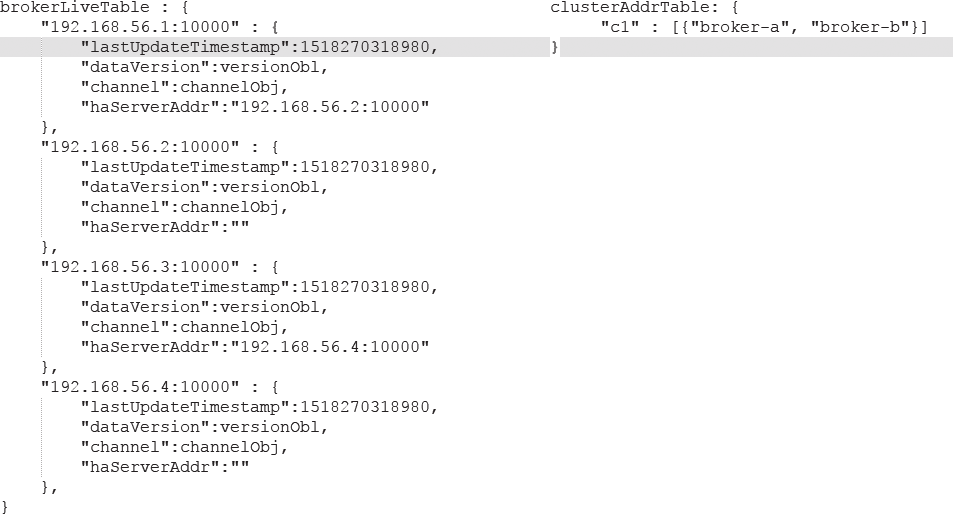

处理心跳包

org.apache.rocketmq.namesrv.processor.DefaultRequestProcessor网路处理类解析请求类型,如果请求类型是为REGISTER_BROKER RouteInfoManager#regiesterBroker

代码:DefaultRequestProcessor#processRequest

1 2 3 4 5 6 7 8 9 case RequestCode.REGISTER_BROKER: Version brokerVersion = MQVersion.value2Version(request.getVersion()); if (brokerVersion.ordinal() >= MQVersion.Version.V3_0_11.ordinal()) { return this .registerBrokerWithFilterServer(ctx, request); } else { return this .registerBroker(ctx, request); }

代码:DefaultRequestProcessor#registerBroker

1 2 3 4 5 6 7 8 9 10 RegisterBrokerResult result = this .namesrvController.getRouteInfoManager().registerBroker( requestHeader.getClusterName(), requestHeader.getBrokerAddr(), requestHeader.getBrokerName(), requestHeader.getBrokerId(), requestHeader.getHaServerAddr(), topicConfigWrapper, null , ctx.channel() );

代码:RouteInfoManager#registerBroker

维护路由信息

1 2 3 4 5 6 7 8 9 this .lock.writeLock().lockInterruptibly();Set<String> brokerNames = this .clusterAddrTable.get(clusterName); if (null == brokerNames) { brokerNames = new HashSet<String>(); this .clusterAddrTable.put(clusterName, brokerNames); } brokerNames.add(brokerName);

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 BrokerData brokerData = this .brokerAddrTable.get(brokerName); if (null == brokerData) { registerFirst = true ; brokerData = new BrokerData(clusterName, brokerName, new HashMap<Long, String>()); this .brokerAddrTable.put(brokerName, brokerData); } Map<Long, String> brokerAddrsMap = brokerData.getBrokerAddrs(); Iterator<Entry<Long, String>> it = brokerAddrsMap.entrySet().iterator(); while (it.hasNext()) { Entry<Long, String> item = it.next(); if (null != brokerAddr && brokerAddr.equals(item.getValue()) && brokerId != item.getKey()) { it.remove(); } } String oldAddr = brokerData.getBrokerAddrs().put(brokerId, brokerAddr); registerFirst = registerFirst || (null == oldAddr);

1 2 3 4 5 6 7 8 9 10 11 12 if (null != topicConfigWrapper && MixAll.MASTER_ID == brokerId) { if (this .isBrokerTopicConfigChanged(brokerAddr, topicConfigWrapper.getDataVersion()) || registerFirst) { ConcurrentMap<String, TopicConfig> tcTable = topicConfigWrapper.getTopicConfigTable(); if (tcTable != null ) { for (Map.Entry<String, TopicConfig> entry : tcTable.entrySet()) { this .createAndUpdateQueueData(brokerName, entry.getValue()); } } } }

代码:RouteInfoManager#createAndUpdateQueueData

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 private void createAndUpdateQueueData (final String brokerName, final TopicConfig topicConfig) QueueData queueData = new QueueData(); queueData.setBrokerName(brokerName); queueData.setWriteQueueNums(topicConfig.getWriteQueueNums()); queueData.setReadQueueNums(topicConfig.getReadQueueNums()); queueData.setPerm(topicConfig.getPerm()); queueData.setTopicSynFlag(topicConfig.getTopicSysFlag()); List<QueueData> queueDataList = this .topicQueueTable.get(topicConfig.getTopicName()); if (null == queueDataList) { queueDataList = new LinkedList<QueueData>(); queueDataList.add(queueData); this .topicQueueTable.put(topicConfig.getTopicName(), queueDataList); log.info("new topic registered, {} {}" , topicConfig.getTopicName(), queueData); } else { boolean addNewOne = true ; Iterator<QueueData> it = queueDataList.iterator(); while (it.hasNext()) { QueueData qd = it.next(); if (qd.getBrokerName().equals(brokerName)) { if (qd.equals(queueData)) { addNewOne = false ; } else { log.info("topic changed, {} OLD: {} NEW: {}" , topicConfig.getTopicName(), qd, queueData); it.remove(); } } } if (addNewOne) { queueDataList.add(queueData); } } }

1 2 3 4 5 6 BrokerLiveInfo prevBrokerLiveInfo = this .brokerLiveTable.put(brokerAddr,new BrokerLiveInfo( System.currentTimeMillis(), topicConfigWrapper.getDataVersion(), channel, haServerAddr));

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 if (filterServerList != null ) { if (filterServerList.isEmpty()) { this .filterServerTable.remove(brokerAddr); } else { this .filterServerTable.put(brokerAddr, filterServerList); } } if (MixAll.MASTER_ID != brokerId) { String masterAddr = brokerData.getBrokerAddrs().get(MixAll.MASTER_ID); if (masterAddr != null ) { BrokerLiveInfo brokerLiveInfo = this .brokerLiveTable.get(masterAddr); if (brokerLiveInfo != null ) { result.setHaServerAddr(brokerLiveInfo.getHaServerAddr()); result.setMasterAddr(masterAddr); } } }

路由删除 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 **RocketMQ有两个触发点来删除路由信息**: * NameServer定期扫描brokerLiveTable检测上次心跳包与当前系统的时间差,如果时间超过120s,则需要移除broker。 * Broker在正常关闭的情况下,会执行unregisterBroker指令 这两种方式路由删除的方法都是一样的,就是从相关路由表中删除与该broker相关的信息。  ***代码:NamesrvController#initialize*** ```java //每隔10s扫描一次为活跃Broker this.scheduledExecutorService.scheduleAtFixedRate(new Runnable() { @Override public void run() { NamesrvController.this.routeInfoManager.scanNotActiveBroker(); } }, 5, 10, TimeUnit.SECONDS);

代码:RouteInfoManager#scanNotActiveBroker

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 public void scanNotActiveBroker () Iterator<Entry<String, BrokerLiveInfo>> it = this .brokerLiveTable.entrySet().iterator(); while (it.hasNext()) { Entry<String, BrokerLiveInfo> next = it.next(); long last = next.getValue().getLastUpdateTimestamp(); if ((last + BROKER_CHANNEL_EXPIRED_TIME) < System.currentTimeMillis()) { RemotingUtil.closeChannel(next.getValue().getChannel()); it.remove(); this .onChannelDestroy(next.getKey(), next.getValue().getChannel()); } } }

代码:RouteInfoManager#onChannelDestroy

1 2 3 4 this .lock.writeLock().lockInterruptibly();this .brokerLiveTable.remove(brokerAddrFound);this .filterServerTable.remove(brokerAddrFound);

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 String brokerNameFound = null ; boolean removeBrokerName = false ;Iterator<Entry<String, BrokerData>> itBrokerAddrTable =this .brokerAddrTable.entrySet().iterator(); while (itBrokerAddrTable.hasNext() && (null == brokerNameFound)) { BrokerData brokerData = itBrokerAddrTable.next().getValue(); Iterator<Entry<Long, String>> it = brokerData.getBrokerAddrs().entrySet().iterator(); while (it.hasNext()) { Entry<Long, String> entry = it.next(); Long brokerId = entry.getKey(); String brokerAddr = entry.getValue(); if (brokerAddr.equals(brokerAddrFound)) { brokerNameFound = brokerData.getBrokerName(); it.remove(); log.info("remove brokerAddr[{}, {}] from brokerAddrTable, because channel destroyed" , brokerId, brokerAddr); break ; } } if (brokerData.getBrokerAddrs().isEmpty()) { removeBrokerName = true ; itBrokerAddrTable.remove(); log.info("remove brokerName[{}] from brokerAddrTable, because channel destroyed" , brokerData.getBrokerName()); } }

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 if (brokerNameFound != null && removeBrokerName) { Iterator<Entry<String, Set<String>>> it = this .clusterAddrTable.entrySet().iterator(); while (it.hasNext()) { Entry<String, Set<String>> entry = it.next(); String clusterName = entry.getKey(); Set<String> brokerNames = entry.getValue(); boolean removed = brokerNames.remove(brokerNameFound); if (removed) { log.info("remove brokerName[{}], clusterName[{}] from clusterAddrTable, because channel destroyed" , brokerNameFound, clusterName); if (brokerNames.isEmpty()) { log.info("remove the clusterName[{}] from clusterAddrTable, because channel destroyed and no broker in this cluster" , clusterName); it.remove(); } break ; } } }

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 if (removeBrokerName) { Iterator<Entry<String, List<QueueData>>> itTopicQueueTable = this .topicQueueTable.entrySet().iterator(); while (itTopicQueueTable.hasNext()) { Entry<String, List<QueueData>> entry = itTopicQueueTable.next(); String topic = entry.getKey(); List<QueueData> queueDataList = entry.getValue(); Iterator<QueueData> itQueueData = queueDataList.iterator(); while (itQueueData.hasNext()) { QueueData queueData = itQueueData.next(); if (queueData.getBrokerName().equals(brokerNameFound)) { itQueueData.remove(); log.info("remove topic[{} {}], from topicQueueTable, because channel destroyed" , topic, queueData); } } if (queueDataList.isEmpty()) { itTopicQueueTable.remove(); log.info("remove topic[{}] all queue, from topicQueueTable, because channel destroyed" , topic); } } }

1 2 3 4 finally { this .lock.writeLock().unlock(); }

路由发现 RocketMQ路由发现是非实时的,当Topic路由出现变化后,NameServer不会主动推送给客户端,而是由客户端定时拉取主题最新的路由。

代码:DefaultRequestProcessor#getRouteInfoByTopic

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 public RemotingCommand getRouteInfoByTopic (ChannelHandlerContext ctx, RemotingCommand request) throws RemotingCommandException final RemotingCommand response = RemotingCommand.createResponseCommand(null ); final GetRouteInfoRequestHeader requestHeader = (GetRouteInfoRequestHeader) request.decodeCommandCustomHeader(GetRouteInfoRequestHeader.class); TopicRouteData topicRouteData = this .namesrvController.getRouteInfoManager().pickupTopicRouteData(requestHeader.getTopic()); if (topicRouteData != null ) { if (this .namesrvController.getNamesrvConfig().isOrderMessageEnable()) { String orderTopicConf = this .namesrvController.getKvConfigManager().getKVConfig(NamesrvUtil.NAMESPACE_ORDER_TOPIC_CONFIG, requestHeader.getTopic()); topicRouteData.setOrderTopicConf(orderTopicConf); } byte [] content = topicRouteData.encode(); response.setBody(content); response.setCode(ResponseCode.SUCCESS); response.setRemark(null ); return response; } response.setCode(ResponseCode.TOPIC_NOT_EXIST); response.setRemark("No topic route info in name server for the topic: " + requestHeader.getTopic() + FAQUrl.suggestTodo(FAQUrl.APPLY_TOPIC_URL)); return response; }

小结

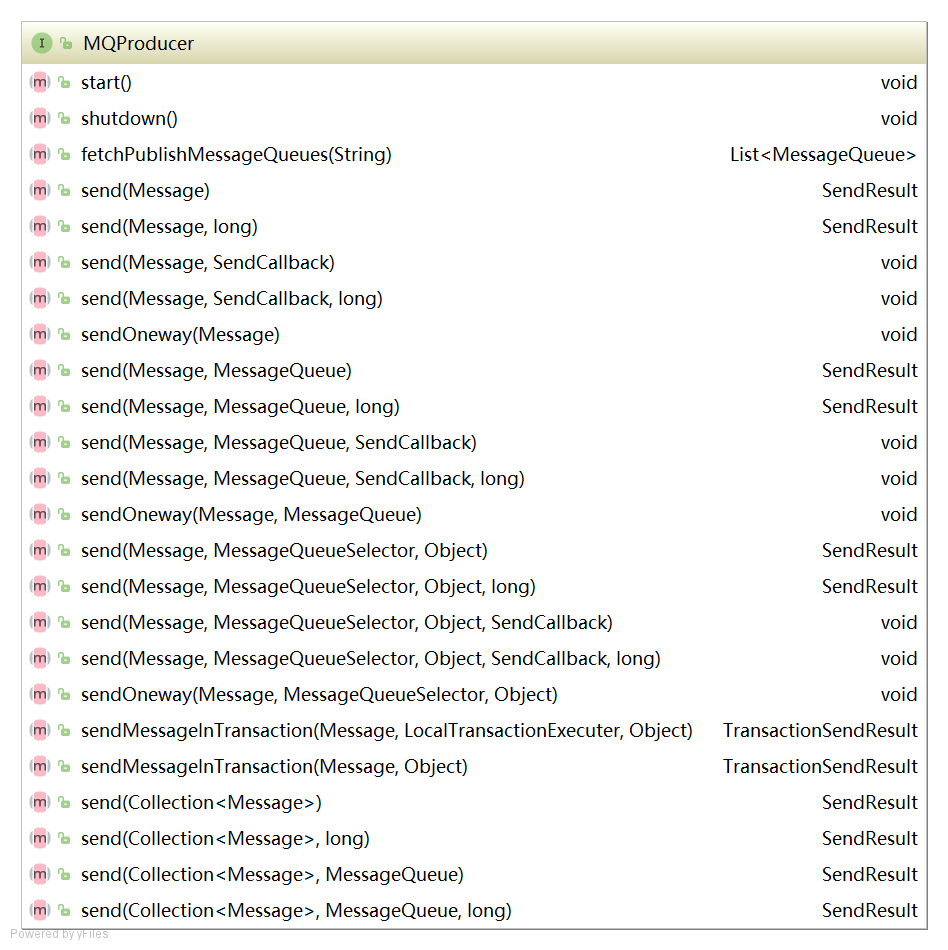

Producer 消息生产者的代码都在client模块中,相对于RocketMQ来讲,消息生产者就是客户端,也是消息的提供者。

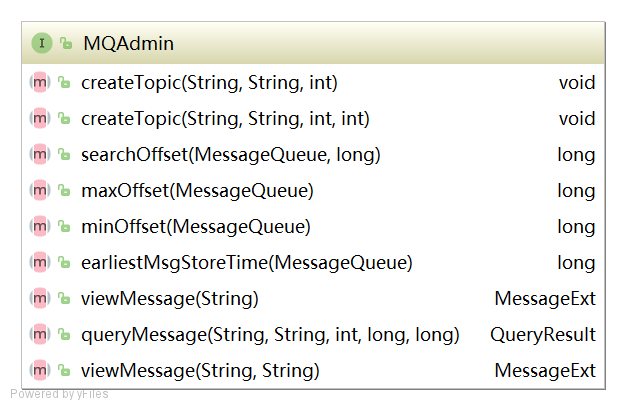

方法和属性 主要方法介绍

1 2 void createTopic (final String key, final String newTopic, final int queueNum) throws MQClientException

1 2 long searchOffset (final MessageQueue mq, final long timestamp)

1 2 long maxOffset (final MessageQueue mq) throws MQClientException

1 2 long minOffset (final MessageQueue mq)

1 2 3 MessageExt viewMessage (final String offsetMsgId) throws RemotingException, MQBrokerException, InterruptedException, MQClientException ;

1 2 3 QueryResult queryMessage (final String topic, final String key, final int maxNum, final long begin, final long end) throws MQClientException, InterruptedException

1 2 MessageExt viewMessage (String topic,String msgId) throws RemotingException, MQBrokerException, InterruptedException, MQClientException ;

1 2 void start () throws MQClientException

1 2 List<MessageQueue> fetchPublishMessageQueues (final String topic) throws MQClientException ;

1 2 3 SendResult send (final Message msg) throws MQClientException, RemotingException, MQBrokerException, InterruptedException ;

1 2 3 SendResult send (final Message msg, final long timeout) throws MQClientException, RemotingException, MQBrokerException, InterruptedException ;

1 2 3 void send (final Message msg, final SendCallback sendCallback) throws MQClientException, RemotingException, InterruptedException ;

1 2 3 void send (final Message msg, final SendCallback sendCallback, final long timeout) throws MQClientException, RemotingException, InterruptedException ;

1 2 3 void sendOneway (final Message msg) throws MQClientException, RemotingException, InterruptedException ;

1 2 3 SendResult send (final Message msg, final MessageQueue mq) throws MQClientException, RemotingException, MQBrokerException, InterruptedException ;

1 2 3 void send (final Message msg, final MessageQueue mq, final SendCallback sendCallback) throws MQClientException, RemotingException, InterruptedException ;

1 2 3 void sendOneway (final Message msg, final MessageQueue mq) throws MQClientException, RemotingException, InterruptedException ;

1 2 SendResult send (final Collection<Message> msgs) throws MQClientException, RemotingException, MQBrokerException,InterruptedException ;

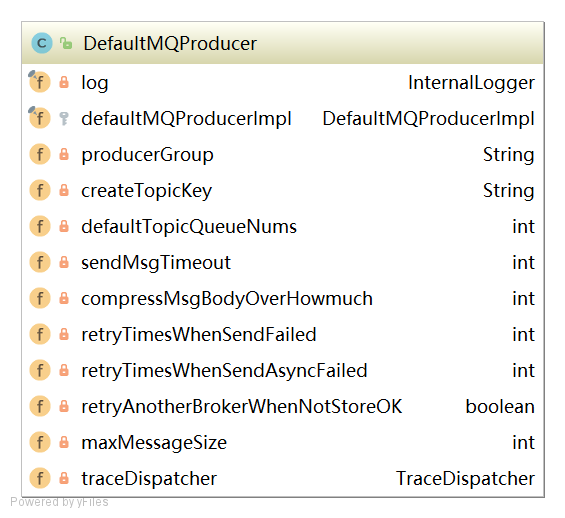

属性介绍

1 2 3 4 5 6 7 8 9 producerGroup:生产者所属组 createTopicKey:默认Topic defaultTopicQueueNums:默认主题在每一个Broker队列数量 sendMsgTimeout:发送消息默认超时时间,默认3 s compressMsgBodyOverHowmuch:消息体超过该值则启用压缩,默认4 k retryTimesWhenSendFailed:同步方式发送消息重试次数,默认为2 ,总共执行3 次 retryTimesWhenSendAsyncFailed:异步方法发送消息重试次数,默认为2 retryAnotherBrokerWhenNotStoreOK:消息重试时选择另外一个Broker时,是否不等待存储结果就返回,默认为false maxMessageSize:允许发送的最大消息长度,默认为4 M

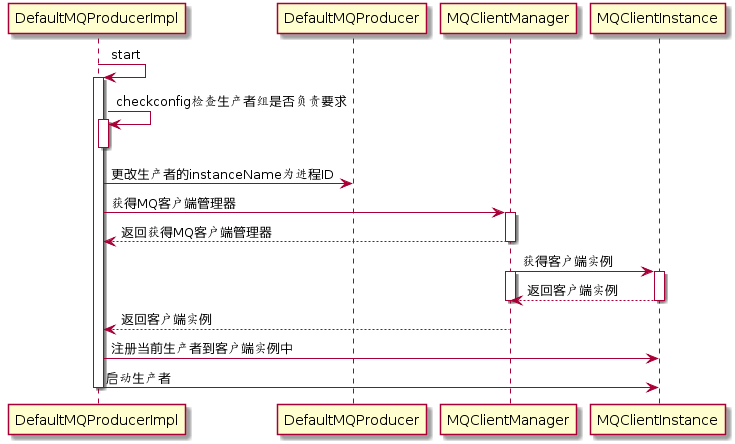

启动流程

代码:DefaultMQProducerImpl#start

1 2 3 4 5 6 7 8 this .checkConfig();if (!this .defaultMQProducer.getProducerGroup().equals(MixAll.CLIENT_INNER_PRODUCER_GROUP)) { this .defaultMQProducer.changeInstanceNameToPID(); } this .mQClientFactory = MQClientManager.getInstance().getAndCreateMQClientInstance(this .defaultMQProducer, rpcHook);

整个JVM中只存在一个MQClientManager实例,维护一个MQClientInstance缓存表

ConcurrentMap<String/ clientId /, MQClientInstance> factoryTable = new ConcurrentHashMap<String,MQClientInstance>();

同一个clientId只会创建一个MQClientInstance。

MQClientInstance封装了RocketMQ网络处理API,是消息生产者和消息消费者与NameServer、Broker打交道的网络通道

代码:MQClientManager#getAndCreateMQClientInstance

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 public MQClientInstance getAndCreateMQClientInstance (final ClientConfig clientConfig, RPCHook rpcHook) String clientId = clientConfig.buildMQClientId(); MQClientInstance instance = this .factoryTable.get(clientId); if (null == instance) { instance = new MQClientInstance(clientConfig.cloneClientConfig(), this .factoryIndexGenerator.getAndIncrement(), clientId, rpcHook); MQClientInstance prev = this .factoryTable.putIfAbsent(clientId, instance); if (prev != null ) { instance = prev; log.warn("Returned Previous MQClientInstance for clientId:[{}]" , clientId); } else { log.info("Created new MQClientInstance for clientId:[{}]" , clientId); } } return instance; }

代码:DefaultMQProducerImpl#start

1 2 3 4 5 6 7 8 9 10 11 12 boolean registerOK = mQClientFactory.registerProducer(this .defaultMQProducer.getProducerGroup(), this );if (!registerOK) { this .serviceState = ServiceState.CREATE_JUST; throw new MQClientException("The producer group[" + this .defaultMQProducer.getProducerGroup() + "] has been created before, specify another name please." + FAQUrl.suggestTodo(FAQUrl.GROUP_NAME_DUPLICATE_URL), null ); } if (startFactory) { mQClientFactory.start(); }

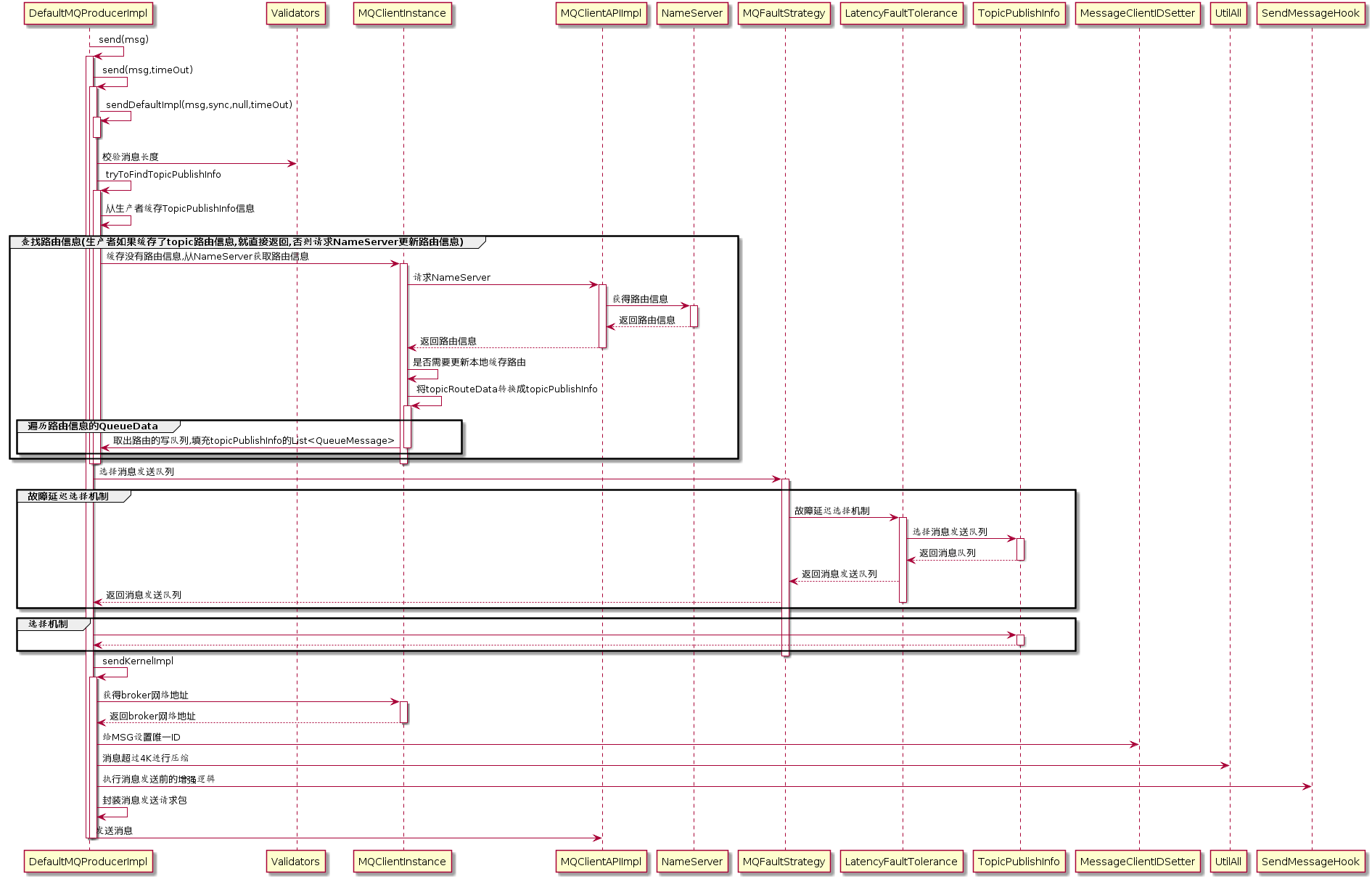

消息发送

代码:DefaultMQProducerImpl#send(Message msg)

1 2 3 4 public SendResult send (Message msg) return send(msg, this .defaultMQProducer.getSendMsgTimeout()); }

代码:DefaultMQProducerImpl#send(Message msg,long timeout)

1 2 3 4 public SendResult send (Message msg,long timeout) return this .sendDefaultImpl(msg, CommunicationMode.SYNC, null , timeout); }

代码:DefaultMQProducerImpl#sendDefaultImpl

1 2 Validators.checkMessage(msg, this .defaultMQProducer);

验证消息 代码:Validators#checkMessage

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public static void checkMessage (Message msg, DefaultMQProducer defaultMQProducer) throws MQClientException { if (null == msg) { throw new MQClientException(ResponseCode.MESSAGE_ILLEGAL, "the message is null" ); } Validators.checkTopic(msg.getTopic()); if (null == msg.getBody()) { throw new MQClientException(ResponseCode.MESSAGE_ILLEGAL, "the message body is null" ); } if (0 == msg.getBody().length) { throw new MQClientException(ResponseCode.MESSAGE_ILLEGAL, "the message body length is zero" ); } if (msg.getBody().length > defaultMQProducer.getMaxMessageSize()) { throw new MQClientException(ResponseCode.MESSAGE_ILLEGAL, "the message body size over max value, MAX: " + defaultMQProducer.getMaxMessageSize()); } }

查找路由 代码:DefaultMQProducerImpl#tryToFindTopicPublishInfo

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 private TopicPublishInfo tryToFindTopicPublishInfo (final String topic) TopicPublishInfo topicPublishInfo = this .topicPublishInfoTable.get(topic); if (null == topicPublishInfo || !topicPublishInfo.ok()) { this .topicPublishInfoTable.putIfAbsent(topic, new TopicPublishInfo()); this .mQClientFactory.updateTopicRouteInfoFromNameServer(topic); topicPublishInfo = this .topicPublishInfoTable.get(topic); } if (topicPublishInfo.isHaveTopicRouterInfo() || topicPublishInfo.ok()) { return topicPublishInfo; } else { this .mQClientFactory.updateTopicRouteInfoFromNameServer(topic, true , this .defaultMQProducer); topicPublishInfo = this .topicPublishInfoTable.get(topic); return topicPublishInfo; } }

代码:TopicPublishInfo

1 2 3 4 5 6 7 public class TopicPublishInfo private boolean orderTopic = false ; private boolean haveTopicRouterInfo = false ; private List<MessageQueue> messageQueueList = new ArrayList<MessageQueue>(); private volatile ThreadLocalIndex sendWhichQueue = new ThreadLocalIndex(); private TopicRouteData topicRouteData; }

代码:MQClientInstance#updateTopicRouteInfoFromNameServer

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 TopicRouteData topicRouteData; if (isDefault && defaultMQProducer != null ) { topicRouteData = this .mQClientAPIImpl.getDefaultTopicRouteInfoFromNameServer(defaultMQProducer.getCreateTopicKey(), 1000 * 3 ); if (topicRouteData != null ) { for (QueueData data : topicRouteData.getQueueDatas()) { int queueNums = Math.min(defaultMQProducer.getDefaultTopicQueueNums(), data.getReadQueueNums()); data.setReadQueueNums(queueNums); data.setWriteQueueNums(queueNums); } } } else { topicRouteData = this .mQClientAPIImpl.getTopicRouteInfoFromNameServer(topic, 1000 * 3 ); }

代码:MQClientInstance#updateTopicRouteInfoFromNameServer

1 2 3 4 5 6 7 8 TopicRouteData old = this .topicRouteTable.get(topic); boolean changed = topicRouteDataIsChange(old, topicRouteData);if (!changed) { changed = this .isNeedUpdateTopicRouteInfo(topic); } else { log.info("the topic[{}] route info changed, old[{}] ,new[{}]" , topic, old, topicRouteData); }

代码:MQClientInstance#updateTopicRouteInfoFromNameServer

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 if (changed) { TopicPublishInfo publishInfo = topicRouteData2TopicPublishInfo(topic, topicRouteData); publishInfo.setHaveTopicRouterInfo(true ); Iterator<Entry<String, MQProducerInner>> it = this .producerTable.entrySet().iterator(); while (it.hasNext()) { Entry<String, MQProducerInner> entry = it.next(); MQProducerInner impl = entry.getValue(); if (impl != null ) { impl.updateTopicPublishInfo(topic, publishInfo); } } }

代码:MQClientInstance#topicRouteData2TopicPublishInfo

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 public static TopicPublishInfo topicRouteData2TopicPublishInfo (final String topic, final TopicRouteData route) TopicPublishInfo info = new TopicPublishInfo(); info.setTopicRouteData(route); if (route.getOrderTopicConf() != null && route.getOrderTopicConf().length() > 0 ) { String[] brokers = route.getOrderTopicConf().split(";" ); for (String broker : brokers) { String[] item = broker.split(":" ); int nums = Integer.parseInt(item[1 ]); for (int i = 0 ; i < nums; i++) { MessageQueue mq = new MessageQueue(topic, item[0 ], i); info.getMessageQueueList().add(mq); } } info.setOrderTopic(true ); } else { List<QueueData> qds = route.getQueueDatas(); Collections.sort(qds); for (QueueData qd : qds) { if (PermName.isWriteable(qd.getPerm())) { BrokerData brokerData = null ; for (BrokerData bd : route.getBrokerDatas()) { if (bd.getBrokerName().equals(qd.getBrokerName())) { brokerData = bd; break ; } } if (null == brokerData) { continue ; } if (!brokerData.getBrokerAddrs().containsKey(MixAll.MASTER_ID)) { continue ; } for (int i = 0 ; i < qd.getWriteQueueNums(); i++) { MessageQueue mq = new MessageQueue(topic, qd.getBrokerName(), i); info.getMessageQueueList().add(mq); } } } info.setOrderTopic(false ); } return info; }

选择队列

代码:TopicPublishInfo#selectOneMessageQueue(lastBrokerName)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public MessageQueue selectOneMessageQueue (final String lastBrokerName) if (lastBrokerName == null ) { return selectOneMessageQueue(); } else { int index = this .sendWhichQueue.getAndIncrement(); for (int i = 0 ; i < this .messageQueueList.size(); i++) { int pos = Math.abs(index++) % this .messageQueueList.size(); if (pos < 0 ) pos = 0 ; MessageQueue mq = this .messageQueueList.get(pos); if (!mq.getBrokerName().equals(lastBrokerName)) { return mq; } } return selectOneMessageQueue(); } }

代码:TopicPublishInfo#selectOneMessageQueue()

1 2 3 4 5 6 7 8 9 10 11 public MessageQueue selectOneMessageQueue () int index = this .sendWhichQueue.getAndIncrement(); int pos = Math.abs(index) % this .messageQueueList.size(); if (pos < 0 ) pos = 0 ; return this .messageQueueList.get(pos); }

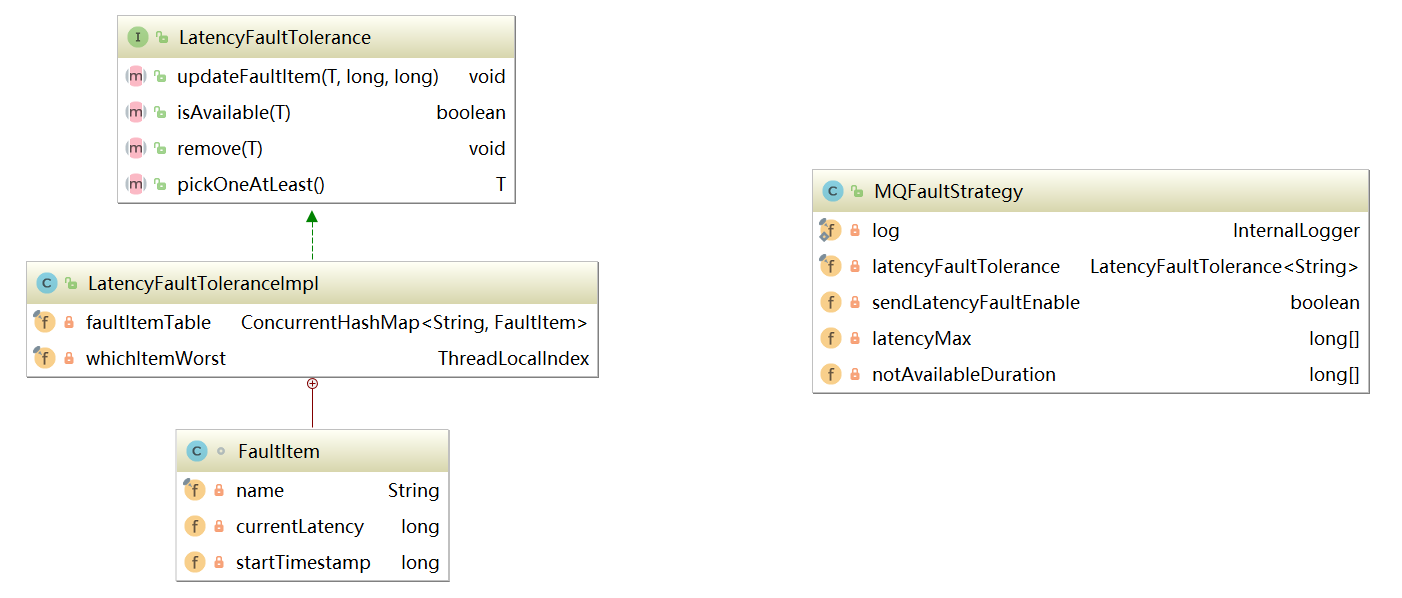

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 public MessageQueue selectOneMessageQueue (final TopicPublishInfo tpInfo, final String lastBrokerName) if (this .sendLatencyFaultEnable) { try { int index = tpInfo.getSendWhichQueue().getAndIncrement(); for (int i = 0 ; i < tpInfo.getMessageQueueList().size(); i++) { int pos = Math.abs(index++) % tpInfo.getMessageQueueList().size(); if (pos < 0 ) pos = 0 ; MessageQueue mq = tpInfo.getMessageQueueList().get(pos); if (latencyFaultTolerance.isAvailable(mq.getBrokerName())) { if (null == lastBrokerName || mq.getBrokerName().equals(lastBrokerName)) return mq; } } final String notBestBroker = latencyFaultTolerance.pickOneAtLeast(); int writeQueueNums = tpInfo.getQueueIdByBroker(notBestBroker); if (writeQueueNums > 0 ) { final MessageQueue mq = tpInfo.selectOneMessageQueue(); if (notBestBroker != null ) { mq.setBrokerName(notBestBroker); mq.setQueueId(tpInfo.getSendWhichQueue().getAndIncrement() % writeQueueNums); } return mq; } else { latencyFaultTolerance.remove(notBestBroker); } } catch (Exception e) { log.error("Error occurred when selecting message queue" , e); } return tpInfo.selectOneMessageQueue(); } return tpInfo.selectOneMessageQueue(lastBrokerName); }

1 2 3 4 5 6 7 8 9 10 public interface LatencyFaultTolerance <T > void updateFaultItem (final T name, final long currentLatency, final long notAvailableDuration) boolean isAvailable (final T name) void remove (final T name) T pickOneAtLeast () ; }

1 2 3 4 5 6 7 8 class FaultItem implements Comparable <FaultItem > private final String name; private volatile long currentLatency; private volatile long startTimestamp; }

1 2 3 4 5 6 public class MQFaultStrategy private long [] latencyMax = {50L , 100L , 550L , 1000L , 2000L , 3000L , 15000L }; private long [] notAvailableDuration = {0L , 0L , 30000L , 60000L , 120000L , 180000L , 600000L }; }

原理分析

代码:DefaultMQProducerImpl#sendDefaultImpl

1 2 3 4 5 6 7 8 sendResult = this .sendKernelImpl(msg, mq, communicationMode, sendCallback, topicPublishInfo, timeout - costTime); endTimestamp = System.currentTimeMillis(); this .updateFaultItem(mq.getBrokerName(), endTimestamp - beginTimestampPrev, false );

如果上述发送过程出现异常,则调用DefaultMQProducerImpl#updateFaultItem

1 2 3 4 5 6 public void updateFaultItem (final String brokerName, final long currentLatency, boolean isolation) this .mqFaultStrategy.updateFaultItem(brokerName, currentLatency, isolation); }

代码:MQFaultStrategy#updateFaultItem

1 2 3 4 5 6 7 8 public void updateFaultItem (final String brokerName, final long currentLatency, boolean isolation) if (this .sendLatencyFaultEnable) { long duration = computeNotAvailableDuration(isolation ? 30000 : currentLatency); this .latencyFaultTolerance.updateFaultItem(brokerName, currentLatency, duration); } }

代码:MQFaultStrategy#computeNotAvailableDuration

1 2 3 4 5 6 7 8 9 10 private long computeNotAvailableDuration (final long currentLatency) for (int i = latencyMax.length - 1 ; i >= 0 ; i--) { if (currentLatency >= latencyMax[i]) return this .notAvailableDuration[i]; } return 0 ; }

代码:LatencyFaultToleranceImpl#updateFaultItem

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 public void updateFaultItem (final String name, final long currentLatency, final long notAvailableDuration) FaultItem old = this .faultItemTable.get(name); if (null == old) { final FaultItem faultItem = new FaultItem(name); faultItem.setCurrentLatency(currentLatency); faultItem.setStartTimestamp(System.currentTimeMillis() + notAvailableDuration); old = this .faultItemTable.putIfAbsent(name, faultItem); if (old != null ) { old.setCurrentLatency(currentLatency); old.setStartTimestamp(System.currentTimeMillis() + notAvailableDuration); } } else { old.setCurrentLatency(currentLatency); old.setStartTimestamp(System.currentTimeMillis() + notAvailableDuration); } }

发送消息 消息发送API核心入口DefaultMQProducerImpl#sendKernelImpl

1 2 3 4 5 6 7 8 private SendResult sendKernelImpl ( final Message msg, //待发送消息 final MessageQueue mq, //消息发送队列 final CommunicationMode communicationMode, //消息发送内模式 final SendCallback sendCallback, pp //异步消息回调函数 final TopicPublishInfo topicPublishInfo, //主题路由信息 final long timeout //超时时间 )

代码:DefaultMQProducerImpl#sendKernelImpl

1 2 3 4 5 6 7 String brokerAddr = this .mQClientFactory.findBrokerAddressInPublish(mq.getBrokerName()); if (null == brokerAddr) { tryToFindTopicPublishInfo(mq.getTopic()); brokerAddr = this .mQClientFactory.findBrokerAddressInPublish(mq.getBrokerName()); }

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 if (!(msg instanceof MessageBatch)) { MessageClientIDSetter.setUniqID(msg); } boolean topicWithNamespace = false ;if (null != this .mQClientFactory.getClientConfig().getNamespace()) { msg.setInstanceId(this .mQClientFactory.getClientConfig().getNamespace()); topicWithNamespace = true ; } int sysFlag = 0 ;boolean msgBodyCompressed = false ;if (this .tryToCompressMessage(msg)) { sysFlag |= MessageSysFlag.COMPRESSED_FLAG; msgBodyCompressed = true ; } final String tranMsg = msg.getProperty(MessageConst.PROPERTY_TRANSACTION_PREPARED);if (tranMsg != null && Boolean.parseBoolean(tranMsg)) { sysFlag |= MessageSysFlag.TRANSACTION_PREPARED_TYPE; }

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 if (this .hasSendMessageHook()) { context = new SendMessageContext(); context.setProducer(this ); context.setProducerGroup(this .defaultMQProducer.getProducerGroup()); context.setCommunicationMode(communicationMode); context.setBornHost(this .defaultMQProducer.getClientIP()); context.setBrokerAddr(brokerAddr); context.setMessage(msg); context.setMq(mq); context.setNamespace(this .defaultMQProducer.getNamespace()); String isTrans = msg.getProperty(MessageConst.PROPERTY_TRANSACTION_PREPARED); if (isTrans != null && isTrans.equals("true" )) { context.setMsgType(MessageType.Trans_Msg_Half); } if (msg.getProperty("__STARTDELIVERTIME" ) != null || msg.getProperty(MessageConst.PROPERTY_DELAY_TIME_LEVEL) != null ) { context.setMsgType(MessageType.Delay_Msg); } this .executeSendMessageHookBefore(context); }

代码:SendMessageHook

1 2 3 4 5 6 7 public interface SendMessageHook String hookName () ; void sendMessageBefore (final SendMessageContext context) void sendMessageAfter (final SendMessageContext context) }

代码:DefaultMQProducerImpl#sendKernelImpl

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 SendMessageRequestHeader requestHeader = new SendMessageRequestHeader(); requestHeader.setProducerGroup(this .defaultMQProducer.getProducerGroup()); requestHeader.setTopic(msg.getTopic()); requestHeader.setDefaultTopic(this .defaultMQProducer.getCreateTopicKey()); requestHeader.setDefaultTopicQueueNums(this .defaultMQProducer.getDefaultTopicQueueNums()); requestHeader.setQueueId(mq.getQueueId()); requestHeader.setSysFlag(sysFlag); requestHeader.setBornTimestamp(System.currentTimeMillis()); requestHeader.setFlag(msg.getFlag()); requestHeader.setProperties(MessageDecoder.messageProperties2String(msg.getProperties())); requestHeader.setReconsumeTimes(0 ); requestHeader.setUnitMode(this .isUnitMode()); requestHeader.setBatch(msg instanceof MessageBatch); if (requestHeader.getTopic().startsWith(MixAll.RETRY_GROUP_TOPIC_PREFIX)) { String reconsumeTimes = MessageAccessor.getReconsumeTime(msg); if (reconsumeTimes != null ) { requestHeader.setReconsumeTimes(Integer.valueOf(reconsumeTimes)); MessageAccessor.clearProperty(msg, MessageConst.PROPERTY_RECONSUME_TIME); } String maxReconsumeTimes = MessageAccessor.getMaxReconsumeTimes(msg); if (maxReconsumeTimes != null ) { requestHeader.setMaxReconsumeTimes(Integer.valueOf(maxReconsumeTimes)); MessageAccessor.clearProperty(msg, MessageConst.PROPERTY_MAX_RECONSUME_TIMES); } }

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 case ASYNC: Message tmpMessage = msg; boolean messageCloned = false ; if (msgBodyCompressed) { tmpMessage = MessageAccessor.cloneMessage(msg); messageCloned = true ; msg.setBody(prevBody); } if (topicWithNamespace) { if (!messageCloned) { tmpMessage = MessageAccessor.cloneMessage(msg); messageCloned = true ; } msg.setTopic(NamespaceUtil.withoutNamespace(msg.getTopic(), this .defaultMQProducer.getNamespace())); } long costTimeAsync = System.currentTimeMillis() - beginStartTime; if (timeout < costTimeAsync) { throw new RemotingTooMuchRequestException("sendKernelImpl call timeout" ); } sendResult = this .mQClientFactory.getMQClientAPIImpl().sendMessage( brokerAddr, mq.getBrokerName(), tmpMessage, requestHeader, timeout - costTimeAsync, communicationMode, sendCallback, topicPublishInfo, this .mQClientFactory, this .defaultMQProducer.getRetryTimesWhenSendAsyncFailed(), context, this ); break ; case ONEWAY:case SYNC: long costTimeSync = System.currentTimeMillis() - beginStartTime; if (timeout < costTimeSync) { throw new RemotingTooMuchRequestException("sendKernelImpl call timeout" ); } sendResult = this .mQClientFactory.getMQClientAPIImpl().sendMessage( brokerAddr, mq.getBrokerName(), msg, requestHeader, timeout - costTimeSync, communicationMode, context, this ); break ; default : assert false ; break ; }

1 2 3 4 5 if (this .hasSendMessageHook()) { context.setSendResult(sendResult); this .executeSendMessageHookAfter(context); }

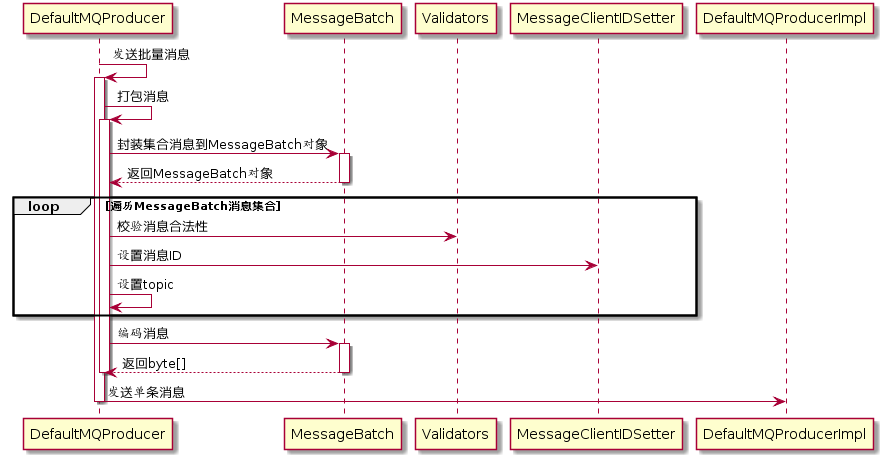

批量消息发送

批量消息发送是将同一个主题的多条消息一起打包发送到消息服务端,减少网络调用次数,提高网络传输效率。当然,并不是在同一批次中发送的消息数量越多越好,其判断依据是单条消息的长度,如果单条消息内容比较长,则打包多条消息发送会影响其他线程发送消息的响应时间,并且单批次消息总长度不能超过DefaultMQProducer#maxMessageSize。

批量消息发送要解决的问题是如何将这些消息编码以便服务端能够正确解码出每条消息的消息内容。

代码:DefaultMQProducer#send

1 2 3 4 5 public SendResult send (Collection<Message> msgs) throws MQClientException, RemotingException, MQBrokerException, InterruptedException { return this .defaultMQProducerImpl.send(batch(msgs)); }

代码:DefaultMQProducer#batch

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 private MessageBatch batch (Collection<Message> msgs) throws MQClientException MessageBatch msgBatch; try { msgBatch = MessageBatch.generateFromList(msgs); for (Message message : msgBatch) { Validators.checkMessage(message, this ); MessageClientIDSetter.setUniqID(message); message.setTopic(withNamespace(message.getTopic())); } msgBatch.setBody(msgBatch.encode()); } catch (Exception e) { throw new MQClientException("Failed to initiate the MessageBatch" , e); } msgBatch.setTopic(withNamespace(msgBatch.getTopic())); return msgBatch; }

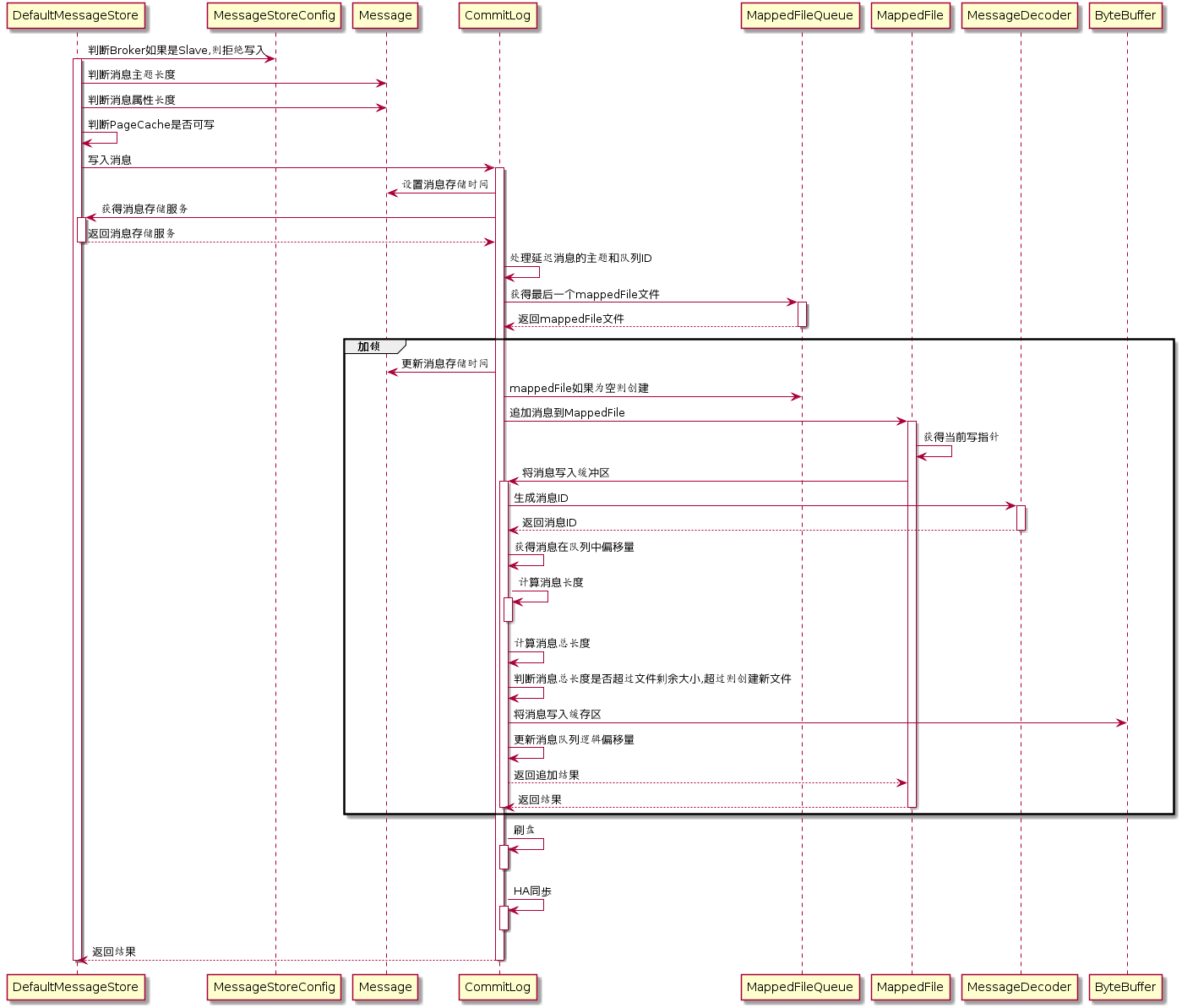

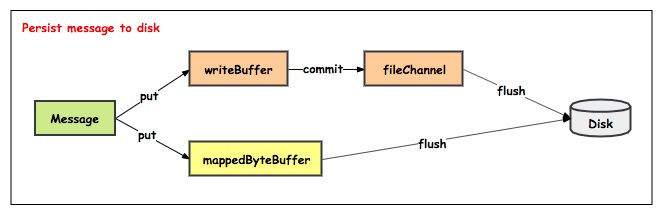

消息存储 消息存储核心类

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 private final MessageStoreConfig messageStoreConfig; private final CommitLog commitLog; private final ConcurrentMap<String, ConcurrentMap<Integer, ConsumeQueue>> consumeQueueTable; private final FlushConsumeQueueService flushConsumeQueueService; private final CleanCommitLogService cleanCommitLogService; private final CleanConsumeQueueService cleanConsumeQueueService; private final IndexService indexService; private final AllocateMappedFileService allocateMappedFileService; private final ReputMessageService reputMessageService;private final HAService haService; private final ScheduleMessageService scheduleMessageService; private final StoreStatsService storeStatsService; private final TransientStorePool transientStorePool; private final BrokerStatsManager brokerStatsManager; private final MessageArrivingListener messageArrivingListener; private final BrokerConfig brokerConfig; private StoreCheckpoint storeCheckpoint; private final LinkedList<CommitLogDispatcher> dispatcherList;

消息存储流程

消息存储入口:DefaultMessageStore#putMessage

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 if (BrokerRole.SLAVE == this .messageStoreConfig.getBrokerRole()) { long value = this .printTimes.getAndIncrement(); if ((value % 50000 ) == 0 ) { log.warn("message store is slave mode, so putMessage is forbidden " ); } return new PutMessageResult(PutMessageStatus.SERVICE_NOT_AVAILABLE, null ); } if (!this .runningFlags.isWriteable()) { long value = this .printTimes.getAndIncrement(); return new PutMessageResult(PutMessageStatus.SERVICE_NOT_AVAILABLE, null ); } else { this .printTimes.set(0 ); } if (msg.getTopic().length() > Byte.MAX_VALUE) { log.warn("putMessage message topic length too long " + msg.getTopic().length()); return new PutMessageResult(PutMessageStatus.MESSAGE_ILLEGAL, null ); } if (msg.getPropertiesString() != null && msg.getPropertiesString().length() > Short.MAX_VALUE) { log.warn("putMessage message properties length too long " + msg.getPropertiesString().length()); return new PutMessageResult(PutMessageStatus.PROPERTIES_SIZE_EXCEEDED, null ); } if (this .isOSPageCacheBusy()) { return new PutMessageResult(PutMessageStatus.OS_PAGECACHE_BUSY, null ); } PutMessageResult result = this .commitLog.putMessage(msg);

代码:CommitLog#putMessage

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 msg.setStoreTimestamp(beginLockTimestamp); if (null == mappedFile || mappedFile.isFull()) { mappedFile = this .mappedFileQueue.getLastMappedFile(0 ); } if (null == mappedFile) { log.error("create mapped file1 error, topic: " + msg.getTopic() + " clientAddr: " + msg.getBornHostString()); beginTimeInLock = 0 ; return new PutMessageResult(PutMessageStatus.CREATE_MAPEDFILE_FAILED, null ); } result = mappedFile.appendMessage(msg, this .appendMessageCallback);

代码:MappedFile#appendMessagesInner

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 int currentPos = this .wrotePosition.get();if (currentPos < this .fileSize) { ByteBuffer byteBuffer = writeBuffer != null ? writeBuffer.slice() : this .mappedByteBuffer.slice(); byteBuffer.position(currentPos); AppendMessageResult result = null ; if (messageExt instanceof MessageExtBrokerInner) { result = cb.doAppend(this .getFileFromOffset(), byteBuffer, this .fileSize - currentPos, (MessageExtBrokerInner) messageExt); } else if (messageExt instanceof MessageExtBatch) { result = cb.doAppend(this .getFileFromOffset(), byteBuffer, this .fileSize - currentPos, (MessageExtBatch) messageExt); } else { return new AppendMessageResult(AppendMessageStatus.UNKNOWN_ERROR); } this .wrotePosition.addAndGet(result.getWroteBytes()); this .storeTimestamp = result.getStoreTimestamp(); return result; }

代码:CommitLog#doAppend

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 long wroteOffset = fileFromOffset + byteBuffer.position();this .resetByteBuffer(hostHolder, 8 );String msgId = MessageDecoder.createMessageId(this .msgIdMemory, msgInner.getStoreHostBytes(hostHolder), wroteOffset); keyBuilder.setLength(0 ); keyBuilder.append(msgInner.getTopic()); keyBuilder.append('-' ); keyBuilder.append(msgInner.getQueueId()); String key = keyBuilder.toString(); Long queueOffset = CommitLog.this .topicQueueTable.get(key); if (null == queueOffset) { queueOffset = 0L ; CommitLog.this .topicQueueTable.put(key, queueOffset); } final byte [] propertiesData =msgInner.getPropertiesString() == null ? null : msgInner.getPropertiesString().getBytes(MessageDecoder.CHARSET_UTF8);final int propertiesLength = propertiesData == null ? 0 : propertiesData.length;if (propertiesLength > Short.MAX_VALUE) { log.warn("putMessage message properties length too long. length={}" , propertiesData.length); return new AppendMessageResult(AppendMessageStatus.PROPERTIES_SIZE_EXCEEDED); } final byte [] topicData = msgInner.getTopic().getBytes(MessageDecoder.CHARSET_UTF8);final int topicLength = topicData.length;final int bodyLength = msgInner.getBody() == null ? 0 : msgInner.getBody().length;final int msgLen = calMsgLength(bodyLength, topicLength, propertiesLength);

代码:CommitLog#calMsgLength

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 protected static int calMsgLength (int bodyLength, int topicLength, int propertiesLength) final int msgLen = 4 + 4 + 4 + 4 + 4 + 8 + 8 + 4 + 8 + 8 + 8 + 8 + 4 + 8 + 4 + (bodyLength > 0 ? bodyLength : 0 ) + 1 + topicLength + 2 + (propertiesLength > 0 ? propertiesLength : 0 ) + 0 ; return msgLen; }

代码:CommitLog#doAppend

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 if (msgLen > this .maxMessageSize) { CommitLog.log.warn("message size exceeded, msg total size: " + msgLen + ", msg body size: " + bodyLength + ", maxMessageSize: " + this .maxMessageSize); return new AppendMessageResult(AppendMessageStatus.MESSAGE_SIZE_EXCEEDED); } if ((msgLen + END_FILE_MIN_BLANK_LENGTH) > maxBlank) { this .resetByteBuffer(this .msgStoreItemMemory, maxBlank); this .msgStoreItemMemory.putInt(maxBlank); this .msgStoreItemMemory.putInt(CommitLog.BLANK_MAGIC_CODE); final long beginTimeMills = CommitLog.this .defaultMessageStore.now(); byteBuffer.put(this .msgStoreItemMemory.array(), 0 , maxBlank); return new AppendMessageResult(AppendMessageStatus.END_OF_FILE, wroteOffset, maxBlank, msgId, msgInner.getStoreTimestamp(), queueOffset, CommitLog.this .defaultMessageStore.now() - beginTimeMills); } final long beginTimeMills = CommitLog.this .defaultMessageStore.now();byteBuffer.put(this .msgStoreItemMemory.array(), 0 , msgLen); AppendMessageResult result = new AppendMessageResult(AppendMessageStatus.PUT_OK, wroteOffset, msgLen, msgId,msgInner.getStoreTimestamp(), queueOffset, CommitLog.this .defaultMessageStore.now() -beginTimeMills); switch (tranType) { case MessageSysFlag.TRANSACTION_PREPARED_TYPE: case MessageSysFlag.TRANSACTION_ROLLBACK_TYPE: break ; case MessageSysFlag.TRANSACTION_NOT_TYPE: case MessageSysFlag.TRANSACTION_COMMIT_TYPE: CommitLog.this .topicQueueTable.put(key, ++queueOffset); break ; default : break ; }

代码:CommitLog#putMessage

1 2 3 4 5 6 putMessageLock.unlock(); handleDiskFlush(result, putMessageResult, msg); handleHA(result, putMessageResult, msg);

存储文件

commitLog:消息存储目录

config:运行期间一些配置信息

consumerqueue:消息消费队列存储目录

index:消息索引文件存储目录

abort:如果存在改文件寿命Broker非正常关闭

checkpoint:文件检查点,存储CommitLog文件最后一次刷盘时间戳、consumerquueue最后一次刷盘时间,index索引文件最后一次刷盘时间戳。

存储文件内存映射 RocketMQ通过使用内存映射文件提高IO访问性能,无论是CommitLog、ConsumerQueue还是IndexFile,单个文件都被设计为固定长度,如果一个文件写满以后再创建一个新文件,文件名就为该文件第一条消息对应的全局物理偏移量。

MappedFileQueue

1 2 3 4 5 6 String storePath; int mappedFileSize; CopyOnWriteArrayList<MappedFile> mappedFiles; AllocateMappedFileService allocateMappedFileService; long flushedWhere = 0 ; long committedWhere = 0 ;

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 public MappedFile getMappedFileByTime (final long timestamp) Object[] mfs = this .copyMappedFiles(0 ); if (null == mfs) return null ; for (int i = 0 ; i < mfs.length; i++) { MappedFile mappedFile = (MappedFile) mfs[i]; if (mappedFile.getLastModifiedTimestamp() >= timestamp) { return mappedFile; } } return (MappedFile) mfs[mfs.length - 1 ]; }

根据消息偏移量offset查找MappedFile

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 public MappedFile findMappedFileByOffset (final long offset, final boolean returnFirstOnNotFound) try { MappedFile firstMappedFile = this .getFirstMappedFile(); MappedFile lastMappedFile = this .getLastMappedFile(); if (firstMappedFile != null && lastMappedFile != null ) { if (offset < firstMappedFile.getFileFromOffset() || offset >= lastMappedFile.getFileFromOffset() + this .mappedFileSize) { } else { int index = (int ) ((offset / this .mappedFileSize) - (firstMappedFile.getFileFromOffset() / this .mappedFileSize)); MappedFile targetFile = null ; try { targetFile = this .mappedFiles.get(index); } catch (Exception ignored) { } if (targetFile != null && offset >= targetFile.getFileFromOffset() && offset < targetFile.getFileFromOffset() + this .mappedFileSize) { return targetFile; } for (MappedFile tmpMappedFile : this .mappedFiles) { if (offset >= tmpMappedFile.getFileFromOffset() && offset < tmpMappedFile.getFileFromOffset() + this .mappedFileSize) { return tmpMappedFile; } } } if (returnFirstOnNotFound) { return firstMappedFile; } } } catch (Exception e) { log.error("findMappedFileByOffset Exception" , e); } return null ; }

1 2 3 4 5 6 7 8 9 10 11 12 13 public long getMinOffset () if (!this .mappedFiles.isEmpty()) { try { return this .mappedFiles.get(0 ).getFileFromOffset(); } catch (IndexOutOfBoundsException e) { } catch (Exception e) { log.error("getMinOffset has exception." , e); } } return -1 ; }

1 2 3 4 5 6 7 public long getMaxOffset () MappedFile mappedFile = getLastMappedFile(); if (mappedFile != null ) { return mappedFile.getFileFromOffset() + mappedFile.getReadPosition(); } return 0 ; }

1 2 3 4 5 6 7 public long getMaxWrotePosition () MappedFile mappedFile = getLastMappedFile(); if (mappedFile != null ) { return mappedFile.getFileFromOffset() + mappedFile.getWrotePosition(); } return 0 ; }

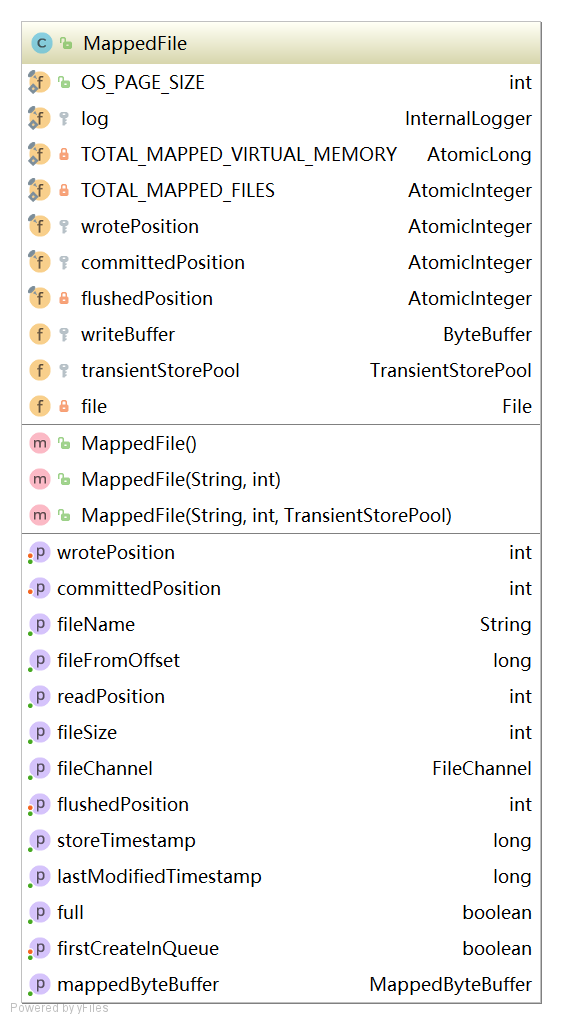

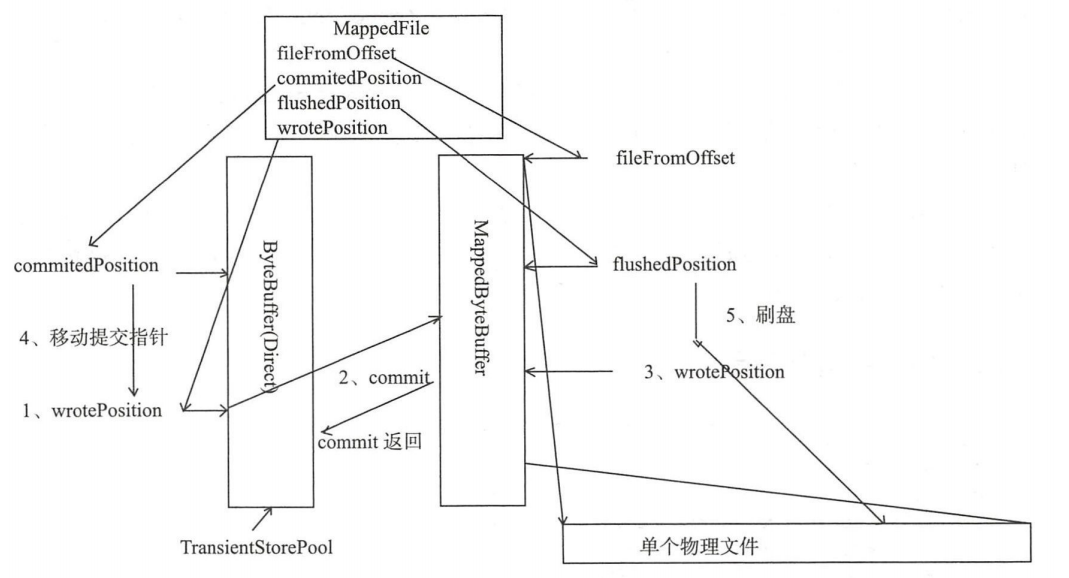

MappedFile

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 int OS_PAGE_SIZE = 1024 * 4 ; AtomicLong TOTAL_MAPPED_VIRTUAL_MEMORY = new AtomicLong(0 ); AtomicInteger TOTAL_MAPPED_FILES = new AtomicInteger(0 ); AtomicInteger wrotePosition = new AtomicInteger(0 ); AtomicInteger committedPosition = new AtomicInteger(0 ); AtomicInteger flushedPosition = new AtomicInteger(0 ); int fileSize; FileChannel fileChannel; ByteBuffer writeBuffer = null ; TransientStorePool transientStorePool = null ; String fileName; long fileFromOffset; File file; MappedByteBuffer mappedByteBuffer; volatile long storeTimestamp = 0 ; boolean firstCreateInQueue = false ;

MappedFile初始化

未开启transientStorePoolEnable。transientStorePoolEnable=true为true表示数据先存储到堆外内存,然后通过Commit线程将数据提交到内存映射Buffer中,再通过Flush线程将内存映射Buffer中数据持久化磁盘。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 private void init (final String fileName, final int fileSize) throws IOException this .fileName = fileName; this .fileSize = fileSize; this .file = new File(fileName); this .fileFromOffset = Long.parseLong(this .file.getName()); boolean ok = false ; ensureDirOK(this .file.getParent()); try { this .fileChannel = new RandomAccessFile(this .file, "rw" ).getChannel(); this .mappedByteBuffer = this .fileChannel.map(MapMode.READ_WRITE, 0 , fileSize); TOTAL_MAPPED_VIRTUAL_MEMORY.addAndGet(fileSize); TOTAL_MAPPED_FILES.incrementAndGet(); ok = true ; } catch (FileNotFoundException e) { log.error("create file channel " + this .fileName + " Failed. " , e); throw e; } catch (IOException e) { log.error("map file " + this .fileName + " Failed. " , e); throw e; } finally { if (!ok && this .fileChannel != null ) { this .fileChannel.close(); } } }

开启transientStorePoolEnable

1 2 3 4 5 6 public void init (final String fileName, final int fileSize, final TransientStorePool transientStorePool) throws IOException init(fileName, fileSize); this .writeBuffer = transientStorePool.borrowBuffer(); this .transientStorePool = transientStorePool; }

MappedFile提交

提交数据到FileChannel,commitLeastPages为本次提交最小的页数,如果待提交数据不满commitLeastPages,则不执行本次提交操作。如果writeBuffer如果为空,直接返回writePosition指针,无需执行commit操作,表名commit操作主体是writeBuffer。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public int commit (final int commitLeastPages) if (writeBuffer == null ) { return this .wrotePosition.get(); } if (this .isAbleToCommit(commitLeastPages)) { if (this .hold()) { commit0(commitLeastPages); this .release(); } else { log.warn("in commit, hold failed, commit offset = " + this .committedPosition.get()); } } if (writeBuffer != null && this .transientStorePool != null && this .fileSize == this .committedPosition.get()) { this .transientStorePool.returnBuffer(writeBuffer); this .writeBuffer = null ; } return this .committedPosition.get(); }

MappedFile#isAbleToCommit

判断是否执行commit操作,如果文件已满返回true;如果commitLeastpages大于0,则比较writePosition与上一次提交的指针commitPosition的差值,除以OS_PAGE_SIZE得到当前脏页的数量,如果大于commitLeastPages则返回true,如果commitLeastpages小于0表示只要存在脏页就提交。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 protected boolean isAbleToCommit (final int commitLeastPages) int flush = this .committedPosition.get(); int write = this .wrotePosition.get(); if (this .isFull()) { return true ; } if (commitLeastPages > 0 ) { return ((write / OS_PAGE_SIZE) - (flush / OS_PAGE_SIZE)) >= commitLeastPages; } return write > flush; }

MappedFile#commit0

具体提交的实现,首先创建WriteBuffer区共享缓存区,然后将新创建的position回退到上一次提交的位置(commitPosition),设置limit为wrotePosition(当前最大有效数据指针),然后把commitPosition到wrotePosition的数据写入到FileChannel中,然后更新committedPosition指针为wrotePosition。commit的作用就是将MappedFile的writeBuffer中数据提交到文件通道FileChannel中。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 protected void commit0 (final int commitLeastPages) int writePos = this .wrotePosition.get(); int lastCommittedPosition = this .committedPosition.get(); if (writePos - this .committedPosition.get() > 0 ) { try { ByteBuffer byteBuffer = writeBuffer.slice(); byteBuffer.position(lastCommittedPosition); byteBuffer.limit(writePos); this .fileChannel.position(lastCommittedPosition); this .fileChannel.write(byteBuffer); this .committedPosition.set(writePos); } catch (Throwable e) { log.error("Error occurred when commit data to FileChannel." , e); } } }

MappedFile#flush

刷写磁盘,直接调用MappedByteBuffer或fileChannel的force方法将内存中的数据持久化到磁盘,那么flushedPosition应该等于MappedByteBuffer中的写指针;如果writeBuffer不为空,则flushPosition应该等于上一次的commit指针;因为上一次提交的数据就是进入到MappedByteBuffer中的数据;如果writeBuffer为空,数据时直接进入到MappedByteBuffer,wrotePosition代表的是MappedByteBuffer中的指针,故设置flushPosition为wrotePosition。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 public int flush (final int flushLeastPages) if (this .isAbleToFlush(flushLeastPages)) { if (this .hold()) { int value = getReadPosition(); try { if (writeBuffer != null || this .fileChannel.position() != 0 ) { this .fileChannel.force(false ); } else { this .mappedByteBuffer.force(); } } catch (Throwable e) { log.error("Error occurred when force data to disk." , e); } this .flushedPosition.set(value); this .release(); } else { log.warn("in flush, hold failed, flush offset = " + this .flushedPosition.get()); this .flushedPosition.set(getReadPosition()); } } return this .getFlushedPosition(); }

MappedFile#getReadPosition

获取当前文件最大可读指针。如果writeBuffer为空,则直接返回当前的写指针;如果writeBuffer不为空,则返回上一次提交的指针。在MappedFile设置中,只有提交了的数据(写入到MappedByteBuffer或FileChannel中的数据)才是安全的数据

1 2 3 4 public int getReadPosition () return this .writeBuffer == null ? this .wrotePosition.get() : this .committedPosition.get(); }

MappedFile#selectMappedBuffer

查找pos到当前最大可读之间的数据,由于在整个写入期间都未曾改MappedByteBuffer的指针,如果mappedByteBuffer.slice()方法返回的共享缓存区空间为整个MappedFile,然后通过设置ByteBuffer的position为待查找的值,读取字节长度当前可读最大长度,最终返回的ByteBuffer的limit为size。整个共享缓存区的容量为(MappedFile#fileSize-pos)。故在操作SelectMappedBufferResult不能对包含在里面的ByteBuffer调用filp方法。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 public SelectMappedBufferResult selectMappedBuffer (int pos) int readPosition = getReadPosition(); if (pos < readPosition && pos >= 0 ) { if (this .hold()) { ByteBuffer byteBuffer = this .mappedByteBuffer.slice(); byteBuffer.position(pos); int size = readPosition - pos; ByteBuffer byteBufferNew = byteBuffer.slice(); byteBufferNew.limit(size); return new SelectMappedBufferResult(this .fileFromOffset + pos, byteBufferNew, size, this ); } } return null ; }

MappedFile#shutdown

MappedFile文件销毁的实现方法为public boolean destory(long intervalForcibly),intervalForcibly表示拒绝被销毁的最大存活时间。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 public void shutdown (final long intervalForcibly) if (this .available) { this .available = false ; this .firstShutdownTimestamp = System.currentTimeMillis(); this .release(); } else if (this .getRefCount() > 0 ) { if ((System.currentTimeMillis() - this .firstShutdownTimestamp) >= intervalForcibly) { this .refCount.set(-1000 - this .getRefCount()); this .release(); } } }

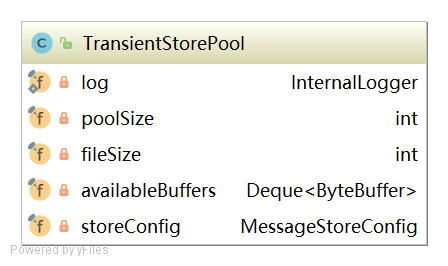

TransientStorePool 短暂的存储池。RocketMQ单独创建一个MappedByteBuffer内存缓存池,用来临时存储数据,数据先写入该内存映射中,然后由commit线程定时将数据从该内存复制到与目标物理文件对应的内存映射中。RocketMQ引入该机制主要的原因是提供一种内存锁定,将当前堆外内存一直锁定在内存中,避免被进程将内存交换到磁盘。

1 2 3 private final int poolSize; private final int fileSize; private final Deque<ByteBuffer> availableBuffers;

初始化

1 2 3 4 5 6 7 8 9 10 11 12 public void init () for (int i = 0 ; i < poolSize; i++) { ByteBuffer byteBuffer = ByteBuffer.allocateDirect(fileSize); final long address = ((DirectBuffer) byteBuffer).address(); Pointer pointer = new Pointer(address); LibC.INSTANCE.mlock(pointer, new NativeLong(fileSize)); availableBuffers.offer(byteBuffer); } }

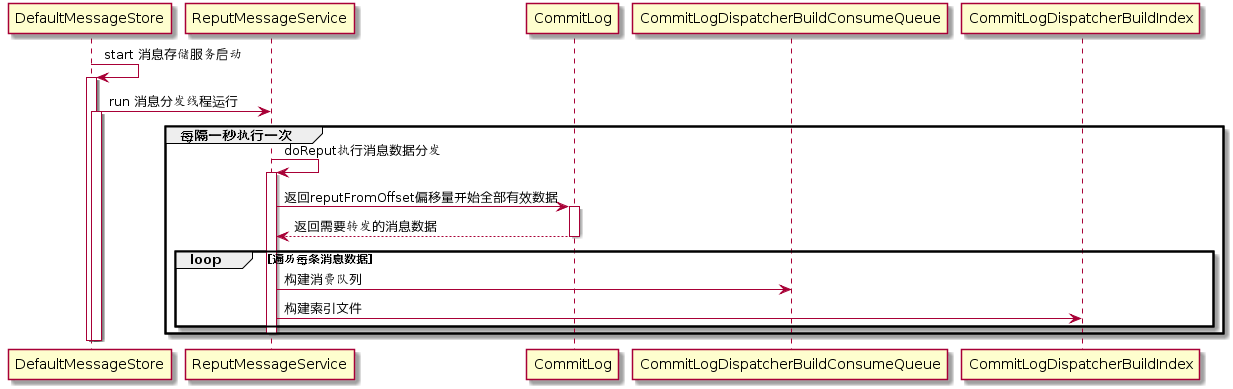

实时更新消息消费队列与索引文件 消息消费队文件、消息属性索引文件都是基于CommitLog文件构建的,当消息生产者提交的消息存储在CommitLog文件中,ConsumerQueue、IndexFile需要及时更新,否则消息无法及时被消费,根据消息属性查找消息也会出现较大延迟。RocketMQ通过开启一个线程ReputMessageService来准实时转发CommitLog文件更新事件,相应的任务处理器根据转发的消息及时更新ConsumerQueue、IndexFile文件。

代码:DefaultMessageStore:start

1 2 3 4 this .reputMessageService.setReputFromOffset(maxPhysicalPosInLogicQueue);this .reputMessageService.start();

代码:DefaultMessageStore:run

1 2 3 4 5 6 7 8 9 10 11 12 13 14 public void run () DefaultMessageStore.log.info(this .getServiceName() + " service started" ); while (!this .isStopped()) { try { Thread.sleep(1 ); this .doReput(); } catch (Exception e) { DefaultMessageStore.log.warn(this .getServiceName() + " service has exception. " , e); } } DefaultMessageStore.log.info(this .getServiceName() + " service end" ); }

代码:DefaultMessageStore:deReput

1 2 3 4 5 6 7 8 9 10 11 for (int readSize = 0 ; readSize < result.getSize() && doNext; ) { DispatchRequest dispatchRequest = DefaultMessageStore.this .commitLog.checkMessageAndReturnSize(result.getByteBuffer(), false , false ); int size = dispatchRequest.getBufferSize() == -1 ? dispatchRequest.getMsgSize() : dispatchRequest.getBufferSize(); if (dispatchRequest.isSuccess()) { if (size > 0 ) { DefaultMessageStore.this .doDispatch(dispatchRequest); } } }

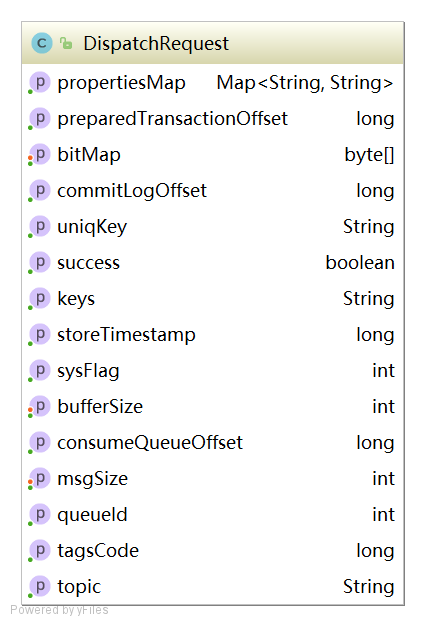

DispatchRequest

1 2 3 4 5 6 7 8 9 10 11 12 13 14 String topic; int queueId; long commitLogOffset; int msgSize; long tagsCode; long storeTimestamp; long consumeQueueOffset; String keys; boolean success; String uniqKey; int sysFlag; long preparedTransactionOffset; Map<String, String> propertiesMap; byte [] bitMap;

转发到ConsumerQueue

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 class CommitLogDispatcherBuildConsumeQueue implements CommitLogDispatcher @Override public void dispatch (DispatchRequest request) final int tranType = MessageSysFlag.getTransactionValue(request.getSysFlag()); switch (tranType) { case MessageSysFlag.TRANSACTION_NOT_TYPE: case MessageSysFlag.TRANSACTION_COMMIT_TYPE: DefaultMessageStore.this .putMessagePositionInfo(request); break ; case MessageSysFlag.TRANSACTION_PREPARED_TYPE: case MessageSysFlag.TRANSACTION_ROLLBACK_TYPE: break ; } } }

代码:DefaultMessageStore#putMessagePositionInfo

1 2 3 4 5 6 public void putMessagePositionInfo (DispatchRequest dispatchRequest) ConsumeQueue cq = this .findConsumeQueue(dispatchRequest.getTopic(), dispatchRequest.getQueueId()); cq.putMessagePositionInfoWrapper(dispatchRequest); }

代码:DefaultMessageStore#putMessagePositionInfo

1 2 3 4 5 6 7 8 9 10 11 12 this .byteBufferIndex.flip();this .byteBufferIndex.limit(CQ_STORE_UNIT_SIZE);this .byteBufferIndex.putLong(offset);this .byteBufferIndex.putInt(size);this .byteBufferIndex.putLong(tagsCode);MappedFile mappedFile = this .mappedFileQueue.getLastMappedFile(expectLogicOffset); if (mappedFile != null ) { return mappedFile.appendMessage(this .byteBufferIndex.array()); }

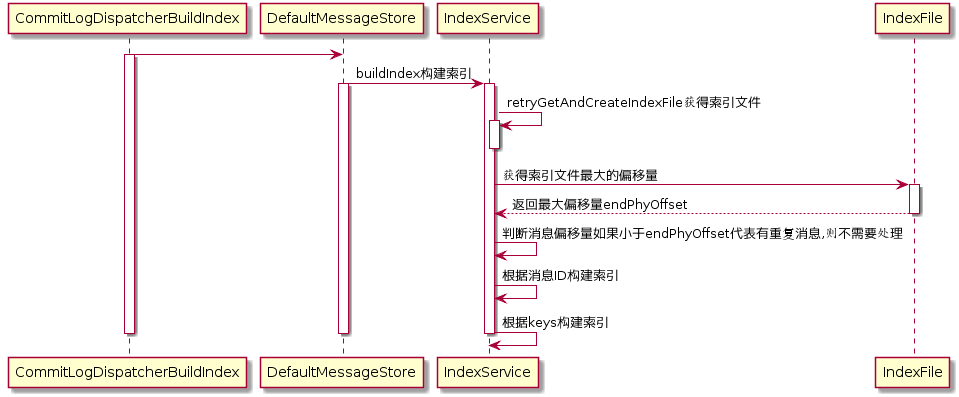

转发到Index

1 2 3 4 5 6 7 8 9 class CommitLogDispatcherBuildIndex implements CommitLogDispatcher @Override public void dispatch (DispatchRequest request) if (DefaultMessageStore.this .messageStoreConfig.isMessageIndexEnable()) { DefaultMessageStore.this .indexService.buildIndex(request); } } }

代码:DefaultMessageStore#buildIndex

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 public void buildIndex (DispatchRequest req) IndexFile indexFile = retryGetAndCreateIndexFile(); if (indexFile != null ) { long endPhyOffset = indexFile.getEndPhyOffset(); DispatchRequest msg = req; String topic = msg.getTopic(); String keys = msg.getKeys(); if (msg.getCommitLogOffset() < endPhyOffset) { return ; } final int tranType = MessageSysFlag.getTransactionValue(msg.getSysFlag()); switch (tranType) { case MessageSysFlag.TRANSACTION_NOT_TYPE: case MessageSysFlag.TRANSACTION_PREPARED_TYPE: case MessageSysFlag.TRANSACTION_COMMIT_TYPE: break ; case MessageSysFlag.TRANSACTION_ROLLBACK_TYPE: return ; } if (req.getUniqKey() != null ) { indexFile = putKey(indexFile, msg, buildKey(topic, req.getUniqKey())); if (indexFile == null ) { return ; } } if (keys != null && keys.length() > 0 ) { String[] keyset = keys.split(MessageConst.KEY_SEPARATOR); for (int i = 0 ; i < keyset.length; i++) { String key = keyset[i]; if (key.length() > 0 ) { indexFile = putKey(indexFile, msg, buildKey(topic, key)); if (indexFile == null ) { return ; } } } } } else { log.error("build index error, stop building index" ); } }

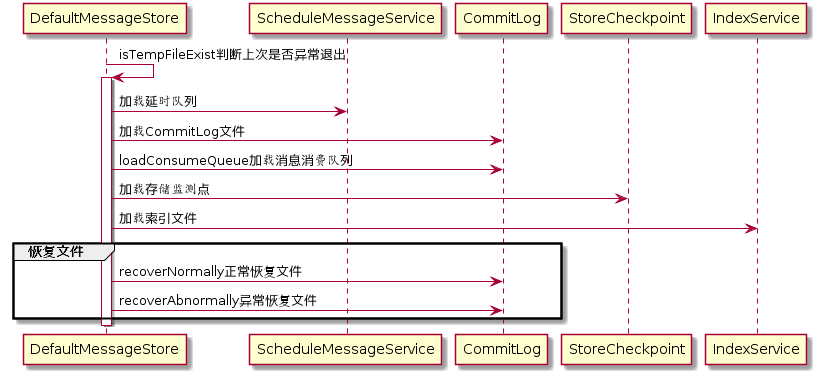

消息队列和索引文件恢复 由于RocketMQ存储首先将消息全量存储在CommitLog文件中,然后异步生成转发任务更新ConsumerQueue和Index文件。如果消息成功存储到CommitLog文件中,转发任务未成功执行,此时消息服务器Broker由于某个愿意宕机,导致CommitLog、ConsumerQueue、IndexFile文件数据不一致。如果不加以人工修复的话,会有一部分消息即便在CommitLog中文件中存在,但由于没有转发到ConsumerQueue,这部分消息将永远复发被消费者消费。

存储文件加载 代码:DefaultMessageStore#load

判断上一次是否异常退出。实现机制是Broker在启动时创建abort文件,在退出时通过JVM钩子函数删除abort文件。如果下次启动时存在abort文件。说明Broker时异常退出的,CommitLog与ConsumerQueue数据有可能不一致,需要进行修复。

1 2 3 4 5 6 7 8 9 boolean lastExitOK = !this .isTempFileExist();private boolean isTempFileExist () String fileName = StorePathConfigHelper .getAbortFile(this .messageStoreConfig.getStorePathRootDir()); File file = new File(fileName); return file.exists(); }

代码:DefaultMessageStore#load

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 if (null != scheduleMessageService) { result = result && this .scheduleMessageService.load(); } result = result && this .commitLog.load(); result = result && this .loadConsumeQueue(); if (result) { this .storeCheckpoint =new StoreCheckpoint(StorePathConfigHelper.getStoreCheckpoint(this .messageStoreConfig.getStorePathRootDir())); this .indexService.load(lastExitOK); this .recover(lastExitOK); }

代码:MappedFileQueue#load

加载CommitLog到映射文件

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 File dir = new File(this .storePath); File[] files = dir.listFiles(); if (files != null ) { Arrays.sort(files); for (File file : files) { if (file.length() != this .mappedFileSize) { return false ; } try { MappedFile mappedFile = new MappedFile(file.getPath(), mappedFileSize); mappedFile.setWrotePosition(this .mappedFileSize); mappedFile.setFlushedPosition(this .mappedFileSize); mappedFile.setCommittedPosition(this .mappedFileSize); this .mappedFiles.add(mappedFile); log.info("load " + file.getPath() + " OK" ); } catch (IOException e) { log.error("load file " + file + " error" , e); return false ; } } } return true ;

代码:DefaultMessageStore#loadConsumeQueue

加载消息消费队列

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 File dirLogic = new File(StorePathConfigHelper.getStorePathConsumeQueue(this .messageStoreConfig.getStorePathRootDir())); File[] fileTopicList = dirLogic.listFiles(); if (fileTopicList != null ) { for (File fileTopic : fileTopicList) { String topic = fileTopic.getName(); File[] fileQueueIdList = fileTopic.listFiles(); if (fileQueueIdList != null ) { for (File fileQueueId : fileQueueIdList) { int queueId; try { queueId = Integer.parseInt(fileQueueId.getName()); } catch (NumberFormatException e) { continue ; } ConsumeQueue logic = new ConsumeQueue( topic, queueId, StorePathConfigHelper.getStorePathConsumeQueue(this .messageStoreConfig.getStorePathRootDir()), this .getMessageStoreConfig().getMapedFileSizeConsumeQueue(), this ); this .putConsumeQueue(topic, queueId, logic); if (!logic.load()) { return false ; } } } } } log.info("load logics queue all over, OK" ); return true ;

代码:IndexService#load

加载索引文件

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 public boolean load (final boolean lastExitOK) File dir = new File(this .storePath); File[] files = dir.listFiles(); if (files != null ) { Arrays.sort(files); for (File file : files) { try { IndexFile f = new IndexFile(file.getPath(), this .hashSlotNum, this .indexNum, 0 , 0 ); f.load(); if (!lastExitOK) { if (f.getEndTimestamp() > this .defaultMessageStore.getStoreCheckpoint() .getIndexMsgTimestamp()) { f.destroy(0 ); continue ; } } log.info("load index file OK, " + f.getFileName()); this .indexFileList.add(f); } catch (IOException e) { log.error("load file {} error" , file, e); return false ; } catch (NumberFormatException e) { log.error("load file {} error" , file, e); } } } return true ; }

代码:DefaultMessageStore#recover

文件恢复,根据Broker是否正常退出执行不同的恢复策略

1 2 3 4 5 6 7 8 9 10 11 12 13 14 private void recover (final boolean lastExitOK) long maxPhyOffsetOfConsumeQueue = this .recoverConsumeQueue(); if (lastExitOK) { this .commitLog.recoverNormally(maxPhyOffsetOfConsumeQueue); } else { this .commitLog.recoverAbnormally(maxPhyOffsetOfConsumeQueue); } this .recoverTopicQueueTable(); }

代码:DefaultMessageStore#recoverTopicQueueTable

恢复ConsumerQueue后,将在CommitLog实例中保存每隔消息队列当前的存储逻辑偏移量,这也是消息中不仅存储主题、消息队列ID、还存储了消息队列的关键所在。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 public void recoverTopicQueueTable () HashMap<String, Long> table = new HashMap<String, Long>(1024 ); long minPhyOffset = this .commitLog.getMinOffset(); for (ConcurrentMap<Integer, ConsumeQueue> maps : this .consumeQueueTable.values()) { for (ConsumeQueue logic : maps.values()) { String key = logic.getTopic() + "-" + logic.getQueueId(); table.put(key, logic.getMaxOffsetInQueue()); logic.correctMinOffset(minPhyOffset); } } this .commitLog.setTopicQueueTable(table); }

正常恢复 代码:CommitLog#recoverNormally